Today's picks

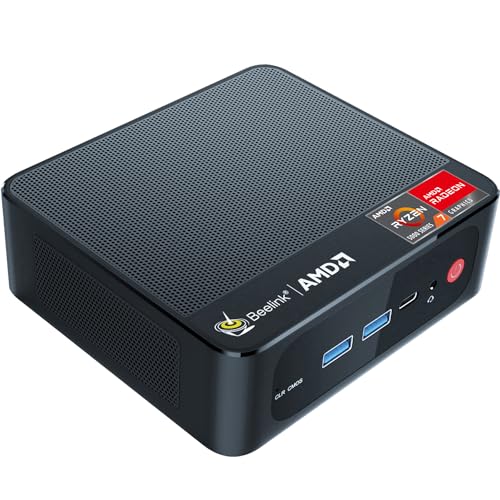

Beelink SER5 Mini Pc,AMD Ryzen 7 5825U PRO(8C/16T,up to 4.5GHz),Mini Computer with 16GB DDR4 RAM/500GB M.2 2280 SSD,Micro Pc Support 4K FPS,WiFi6/BT5.2/2.5G LAN/Home/Office Support Win 11 Pro

Affiliate

$359.00

Learning to code or programming

Programming

117.0K views

2 years ago

GearScouts.com

Sponsored

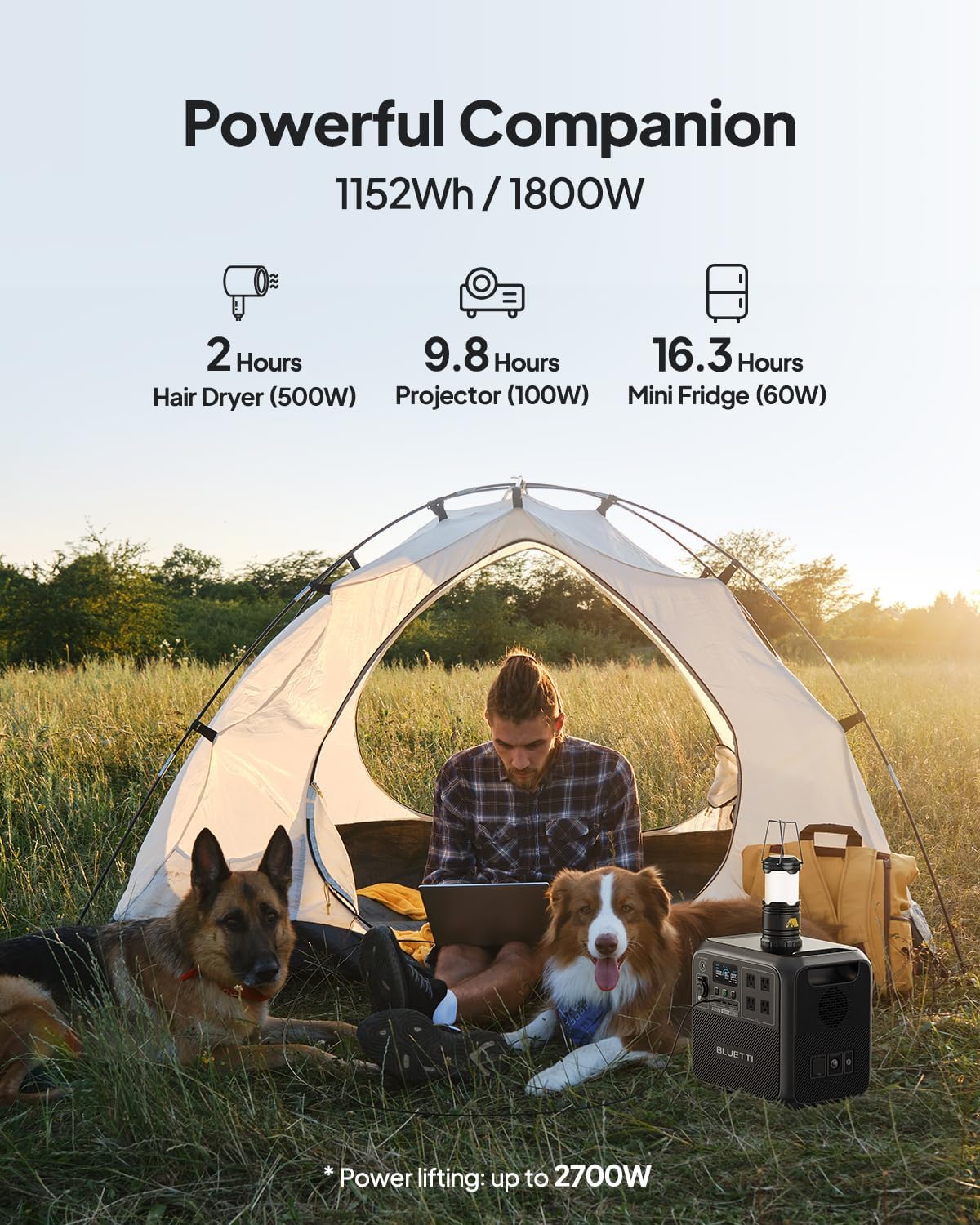

Power stations

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++
