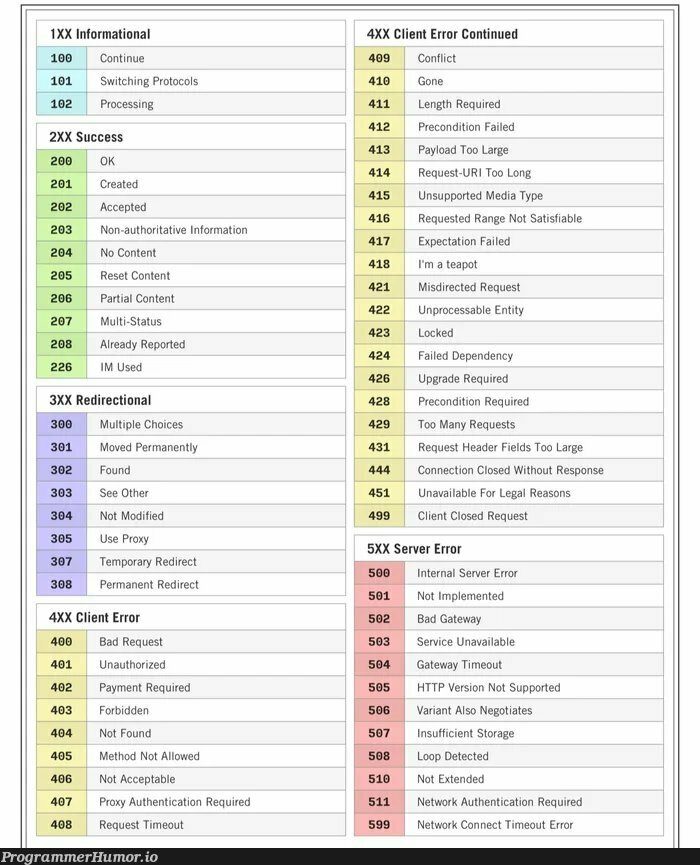

HTTP 418: I'm a teapot

The server identifies as a teapot now and is on a tea break, brb

HTTP 418: I'm a teapot

The server identifies as a teapot now and is on a tea break, brb

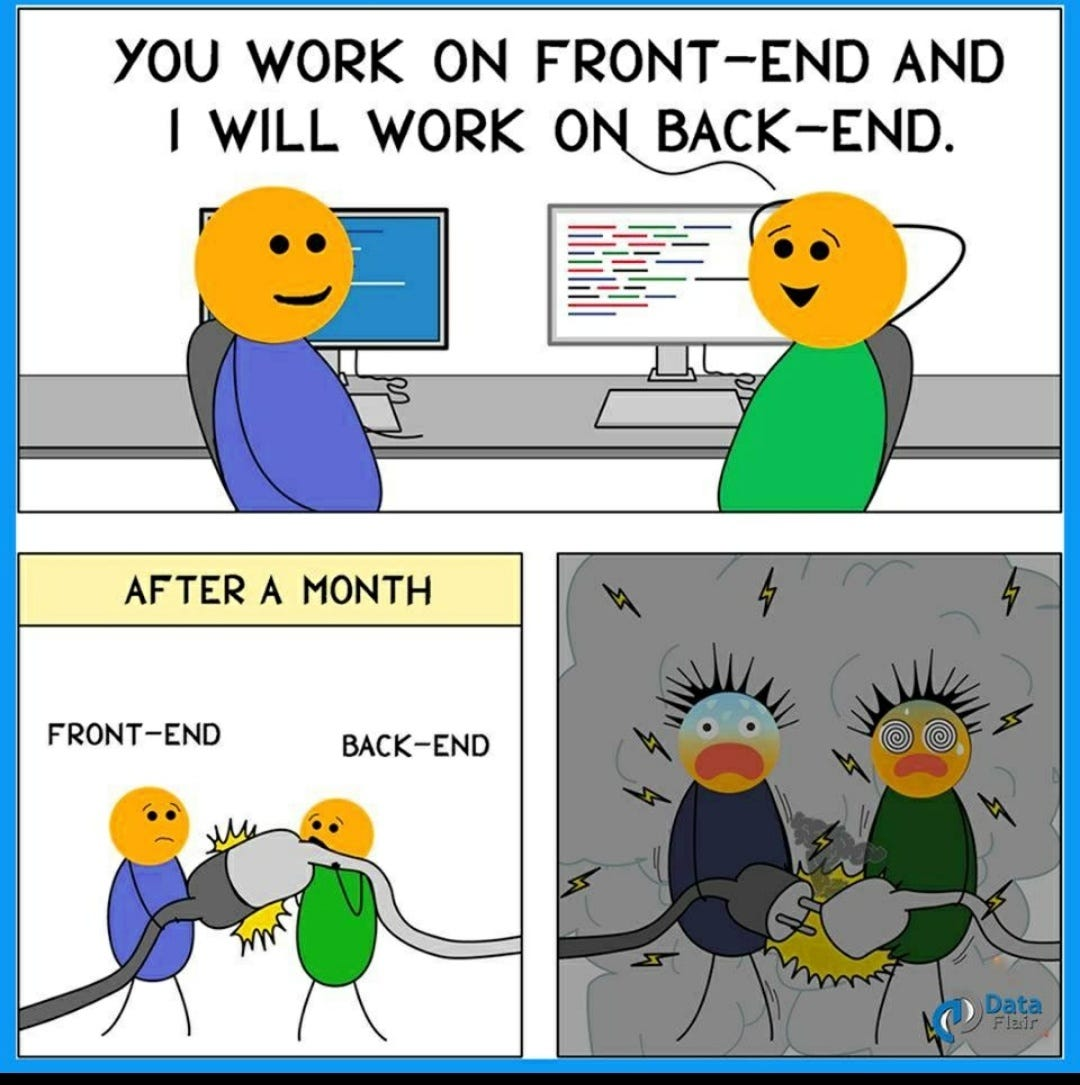

Backend Memes

Backend development: where you do all the real work while the frontend devs argue about button colors for three days. These memes are for the unsung heroes working in the shadows, crafting APIs and database schemas that nobody appreciates until they break. We've all experienced those special moments – like when your microservices aren't so 'micro' anymore, or when that quick hotfix at 2 AM somehow keeps the whole system running for years. Backend devs are a different breed – we get excited about response times in milliseconds and dream in database schemas. If you've ever had to explain why that 'simple feature' requires rebuilding the entire architecture, these memes will feel like a warm, serverless hug.

AI

AI

AWS

AWS

Agile

Agile

Algorithms

Algorithms

Android

Android

Apple

Apple

Bash

Bash

C++

C++