HTTP 418: I'm a teapot

The server identifies as a teapot now and is on a tea break, brb

AI Memes

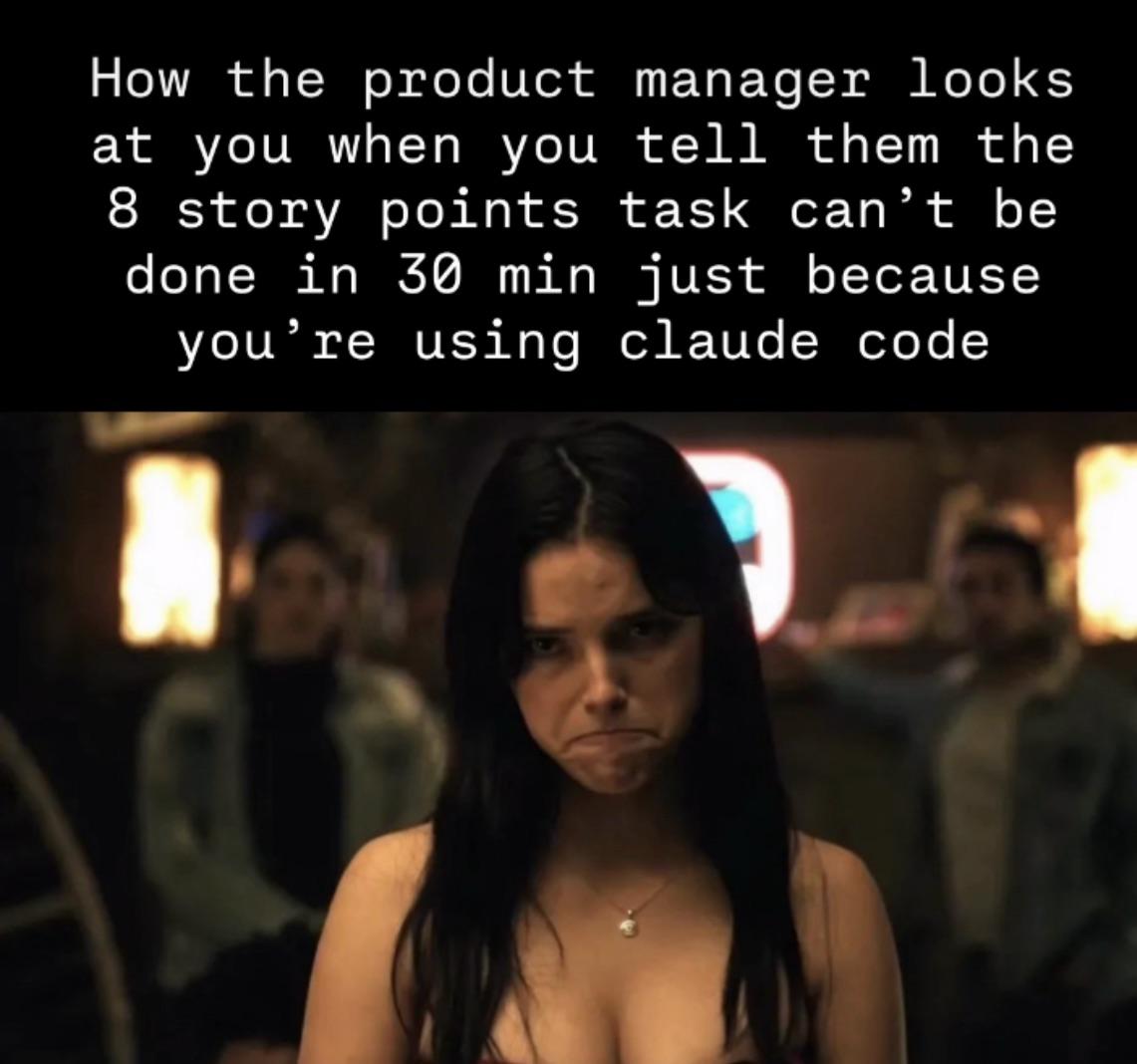

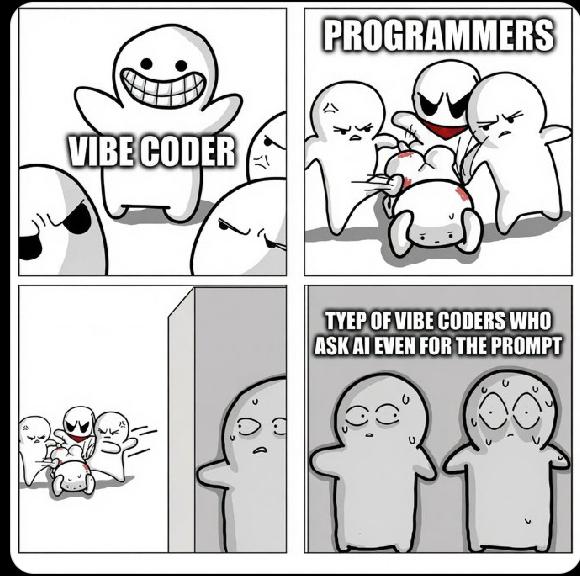

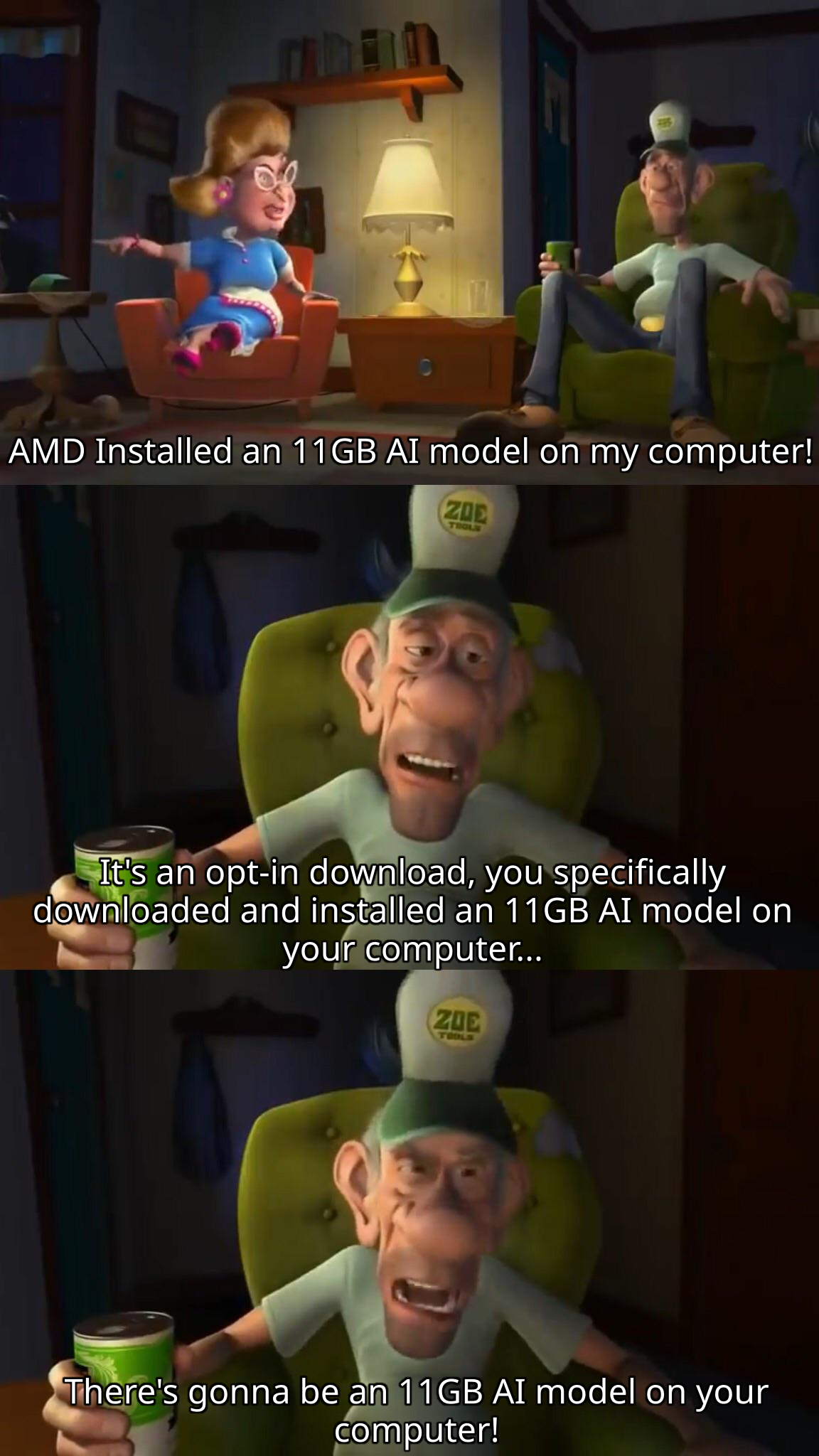

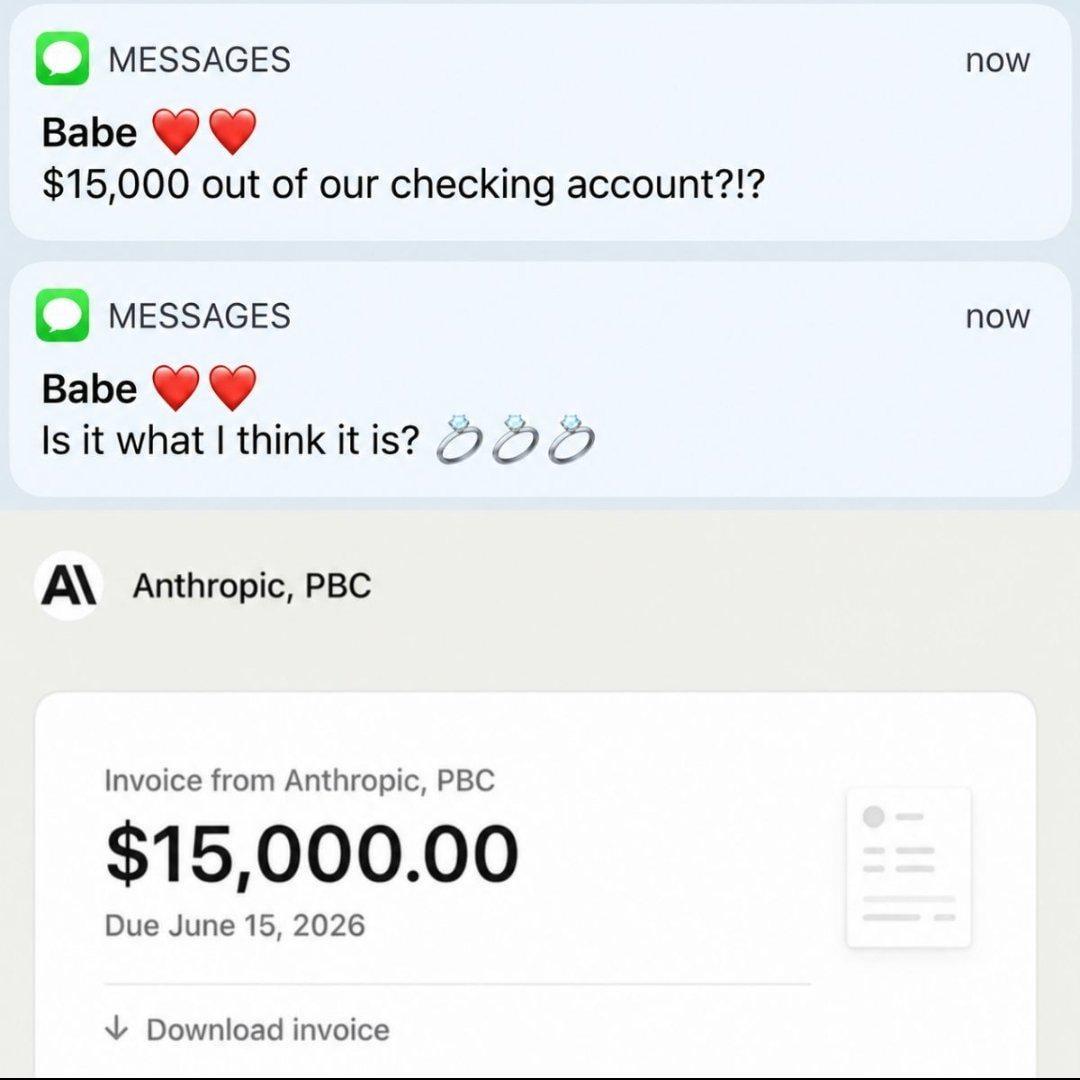

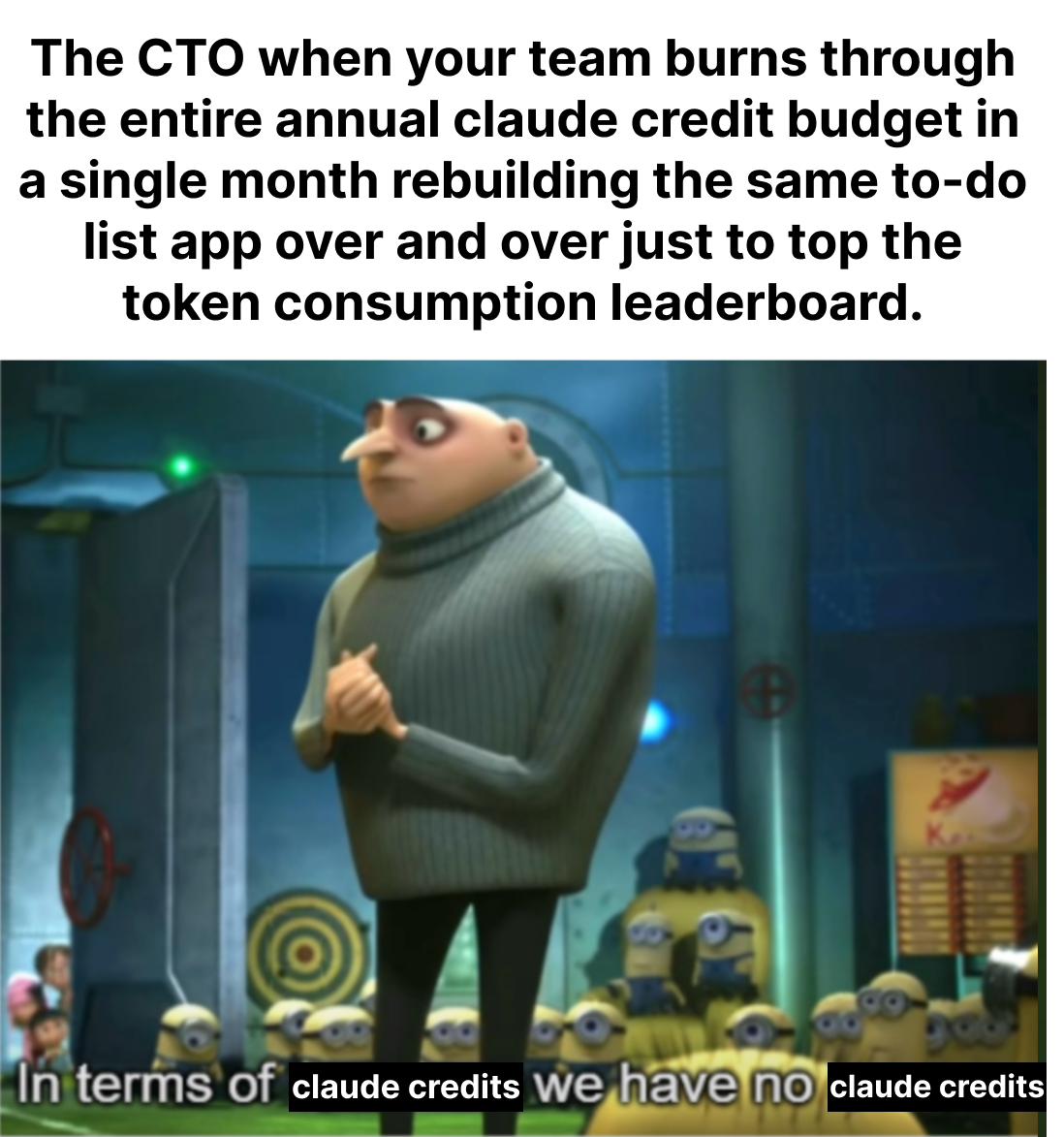

AI: where machines are learning to think while developers are learning to prompt. From frustrating hallucinations to the rise of Vibe Coding, these memes are for everyone who's spent hours crafting the perfect prompt only to get "As an AI language model, I cannot..." in response. We've all been there – telling an AI "make me a to-do app" at 2 AM instead of writing actual code, then spending the next three hours debugging what it hallucinated. Vibe Coding has turned us all into professional AI whisperers, where success depends more on your prompt game than your actual coding skills. "It's not a bug, it's a prompt engineering opportunity!"

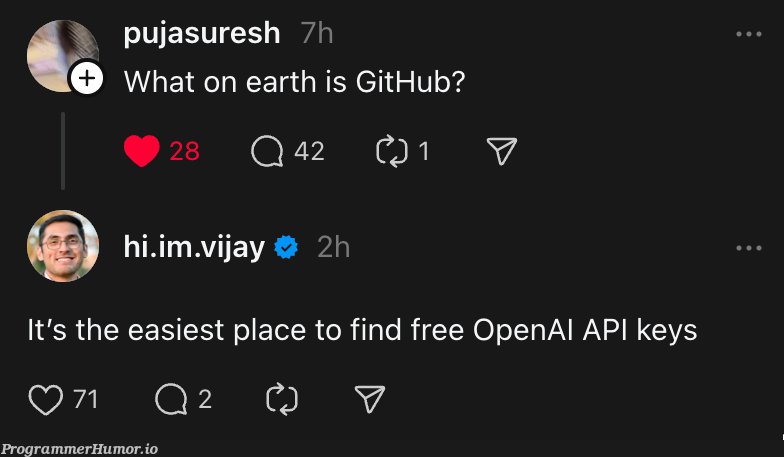

Remember when we used to actually write for loops? Now we're just vibing with AI, dropping vague requirements like "make it prettier" and "you know what I mean" while the AI pretends to understand. We're explaining to non-tech friends that no, ChatGPT isn't actually sentient (we think?), and desperately fine-tuning models that still can't remember context from two paragraphs ago but somehow remember that one obscure Reddit post from 2012.

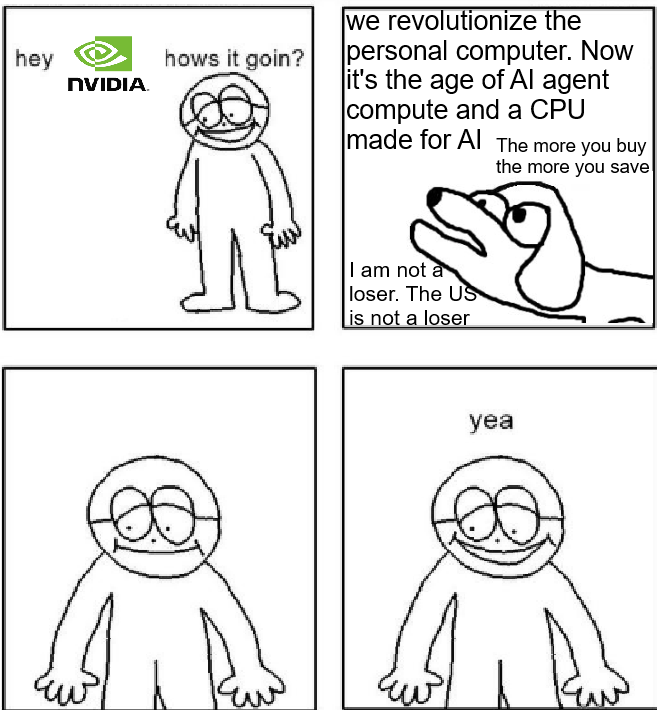

Whether you're a Vibe Coding enthusiast turning three emojis and "kinda like Airbnb but for dogs" into functional software, a prompt engineer (yeah, that's a real job now and no, my parents still don't get what I do either), an ML researcher with a GPU bill higher than your rent, or just someone who's watched Claude completely make up citations with Harvard-level confidence, these memes capture the beautiful chaos of teaching computers to be almost as smart as they think they are.

Join us as we document this bizarre timeline where juniors are Vibe Coding their way through interviews, seniors are questioning their life choices, and we're all just trying to figure out if we're teaching AI or if AI is teaching us. From GPT-4's occasional brilliance to Grok's edgy teenage phase, we're all just vibing in this uncanny valley together. And yeah, I definitely asked an AI to help write this description – how meta is that? Honestly, at this point I'm not even sure which parts I wrote anymore lol.

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++