HTTP 418: I'm a teapot

The server identifies as a teapot now and is on a tea break, brb

HTTP 418: I'm a teapot

The server identifies as a teapot now and is on a tea break, brb

C++ Memes

C++: where you can shoot yourself in the foot, then reload and do it again with operator overloading. These memes celebrate the language that gives you enough power to build operating systems and enough complexity to ensure job security for decades. If you've ever battled template metaprogramming, spent hours debugging memory leaks, or explained to management why rewriting that legacy C++ codebase would take years not months, you'll find your digital support group here. From the special horror of linking errors to the indescribable satisfaction of perfectly optimized code, this collection honors the language that somehow manages to be both low-level and impossibly abstract at the same time.

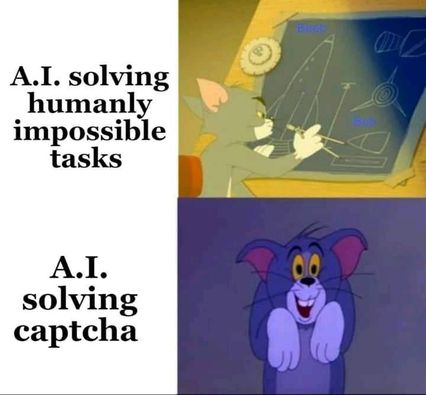

AI

AI

AWS

AWS

Agile

Agile

Algorithms

Algorithms

Android

Android

Apple

Apple

Bash

Bash

C++

C++