Sideproject Always Comes First

Programming

338.5K views

1 year ago

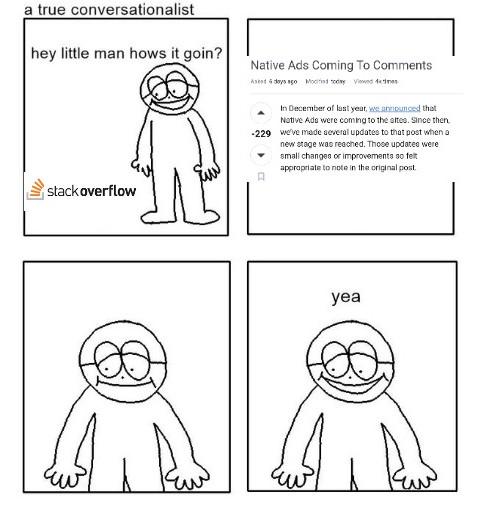

Dependency Despair

JavaScript

127.3K views

1 year ago

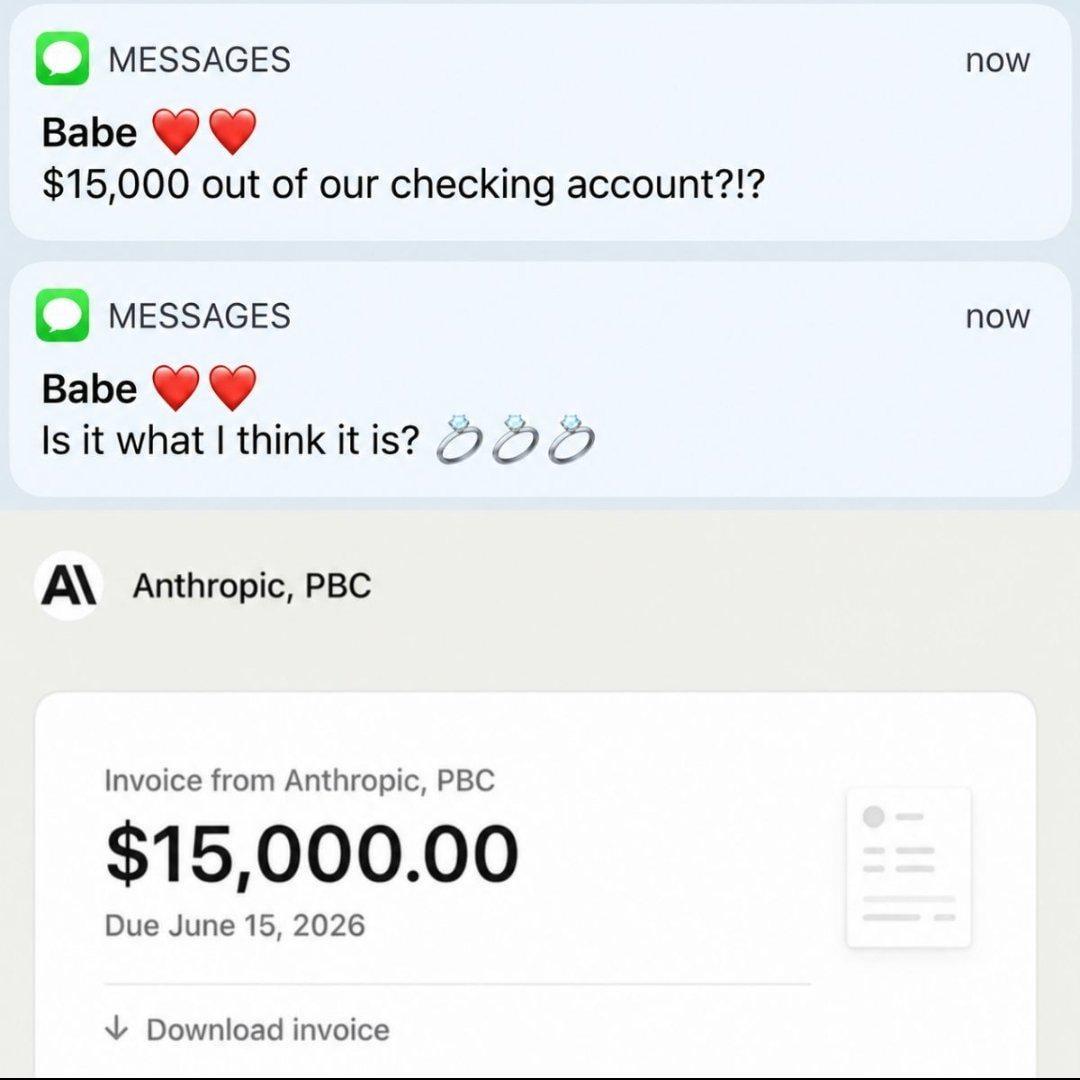

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++
