HTTP 418: I'm a teapot

The server identifies as a teapot now and is on a tea break, brb

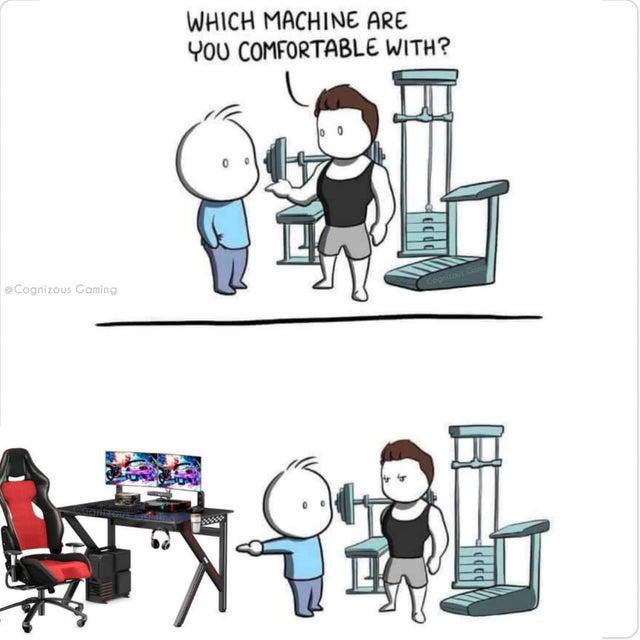

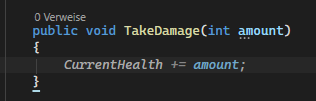

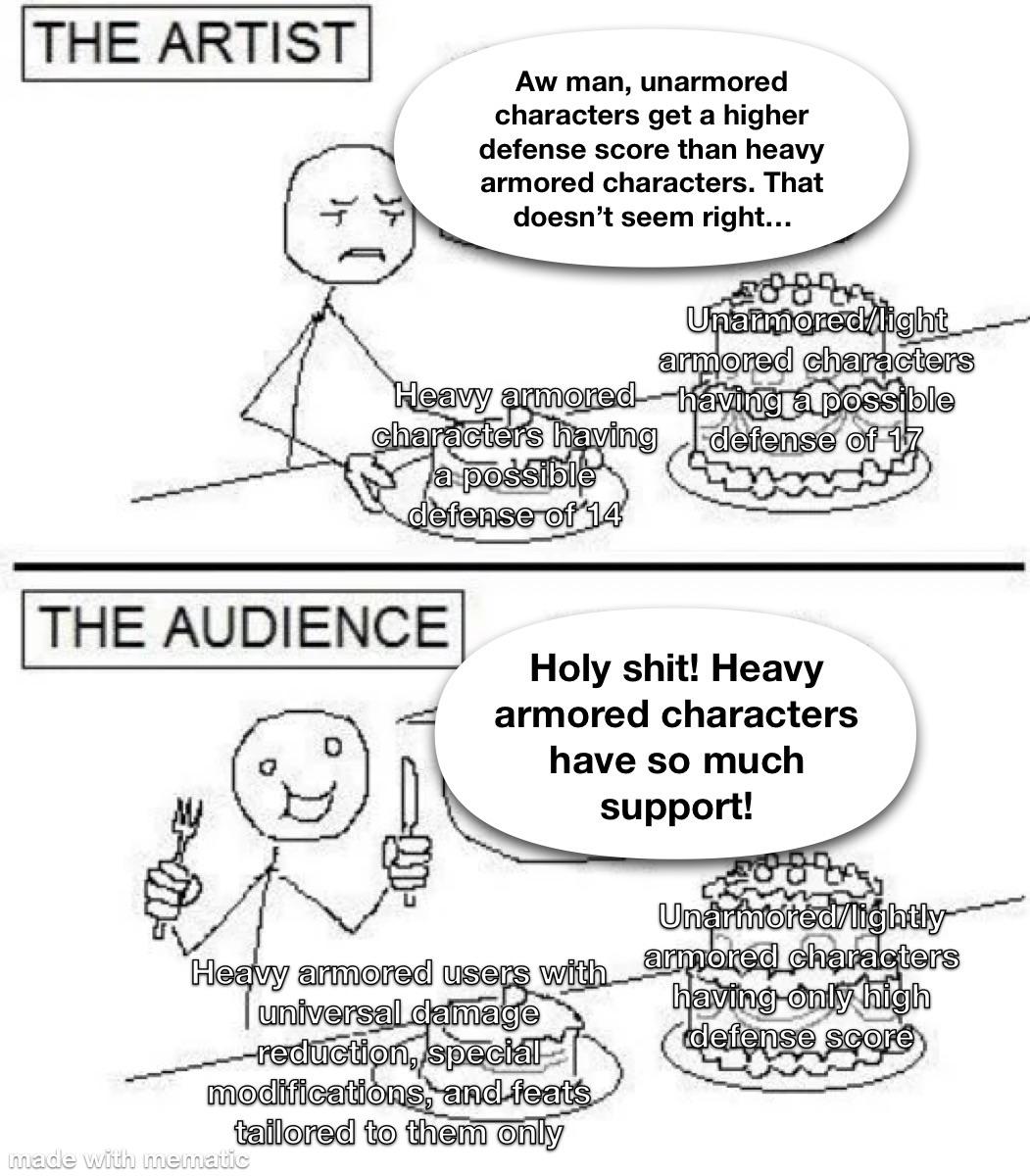

Gamedev Memes

Game Development: where "it's just a small indie project" turns into three years of your life and counting. These memes celebrate the unique intersection of art, programming, design, and masochism that is creating interactive entertainment. If you've ever implemented physics only to watch your character clip through the floor, optimized rendering to gain 2 FPS, or explained to friends that no, you can't just "make a quick MMO," you'll find your people here. From the special horror of scope creep in passion projects to the indescribable joy of watching someone genuinely enjoy your game, this collection captures the rollercoaster that is turning imagination into playable reality.

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++
