HTTP 418: I'm a teapot

The server identifies as a teapot now and is on a tea break, brb

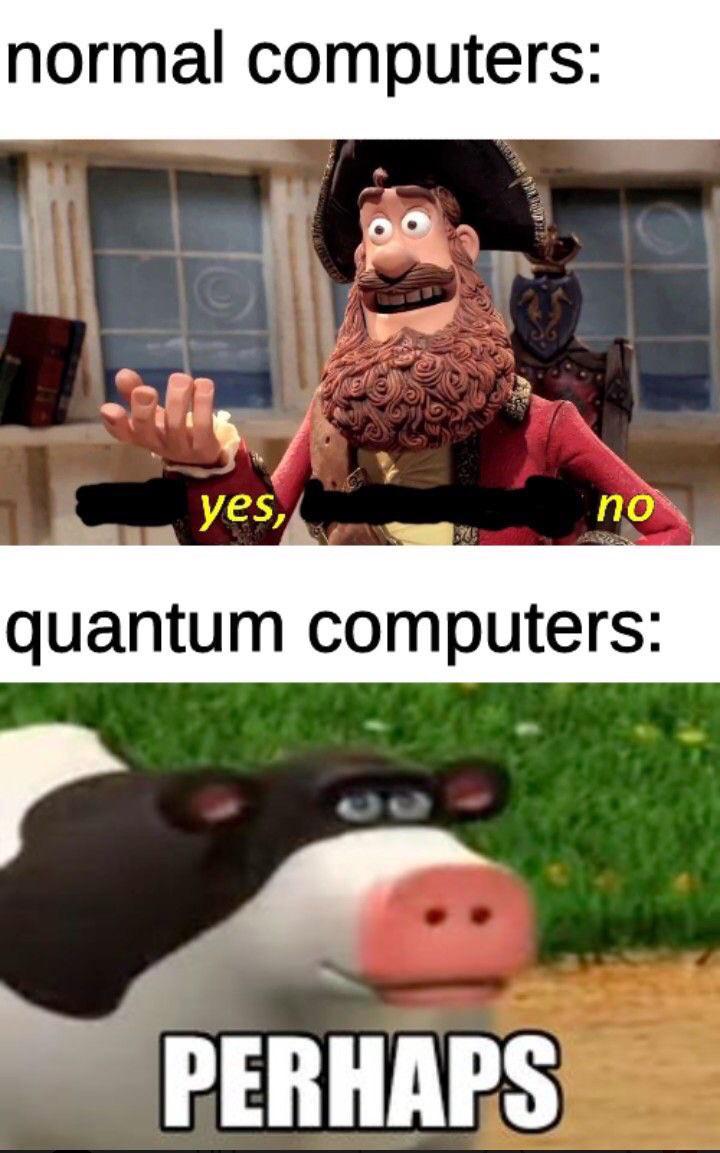

Hardware Memes

Hardware: where software engineers go to discover that physical objects don't have ctrl+z. These memes celebrate the world of tangible computing, from the satisfaction of a perfect cable management setup to the horror of static electricity at exactly the wrong moment. If you've ever upgraded a PC only to create new bottlenecks, explained to non-technical people why more RAM won't fix their internet speed, or developed an emotional attachment to a specific keyboard, you'll find your tribe here. From the endless debate between PC and Mac to the special joy of finally affording that GPU you've been eyeing for months, this collection captures the unique blend of precision and chaos that is hardware.

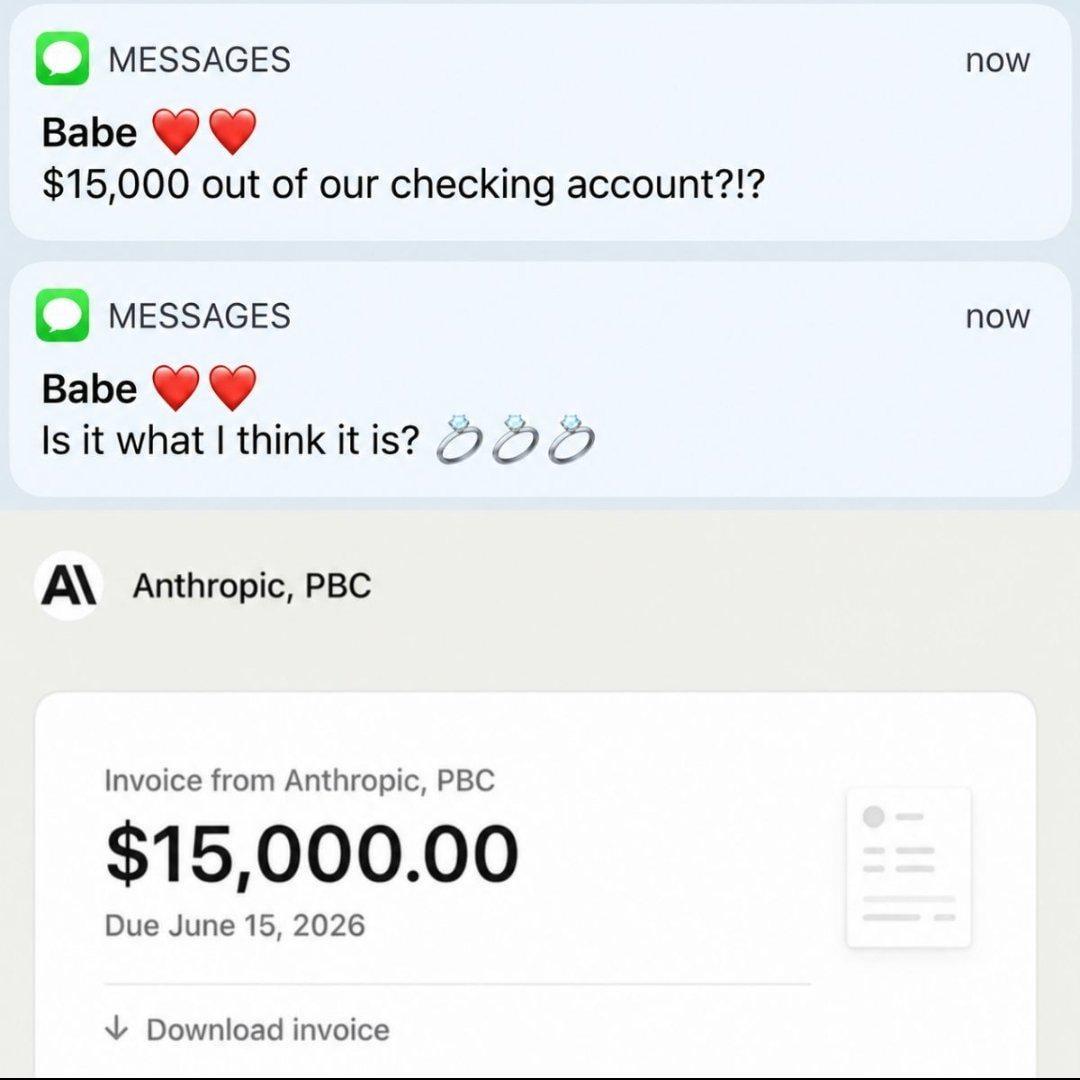

AI

AWS

Agile

Algorithms

Android

Apple

Bash

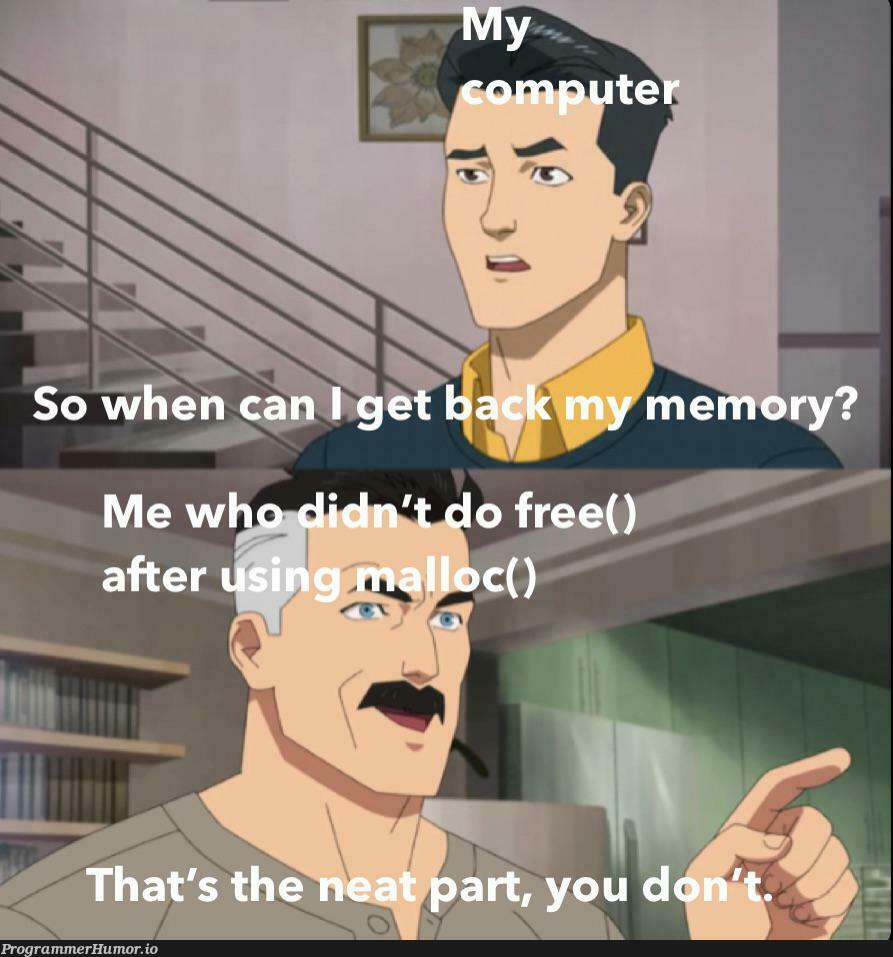

C++
