HTTP 418: I'm a teapot

The server identifies as a teapot now and is on a tea break, brb

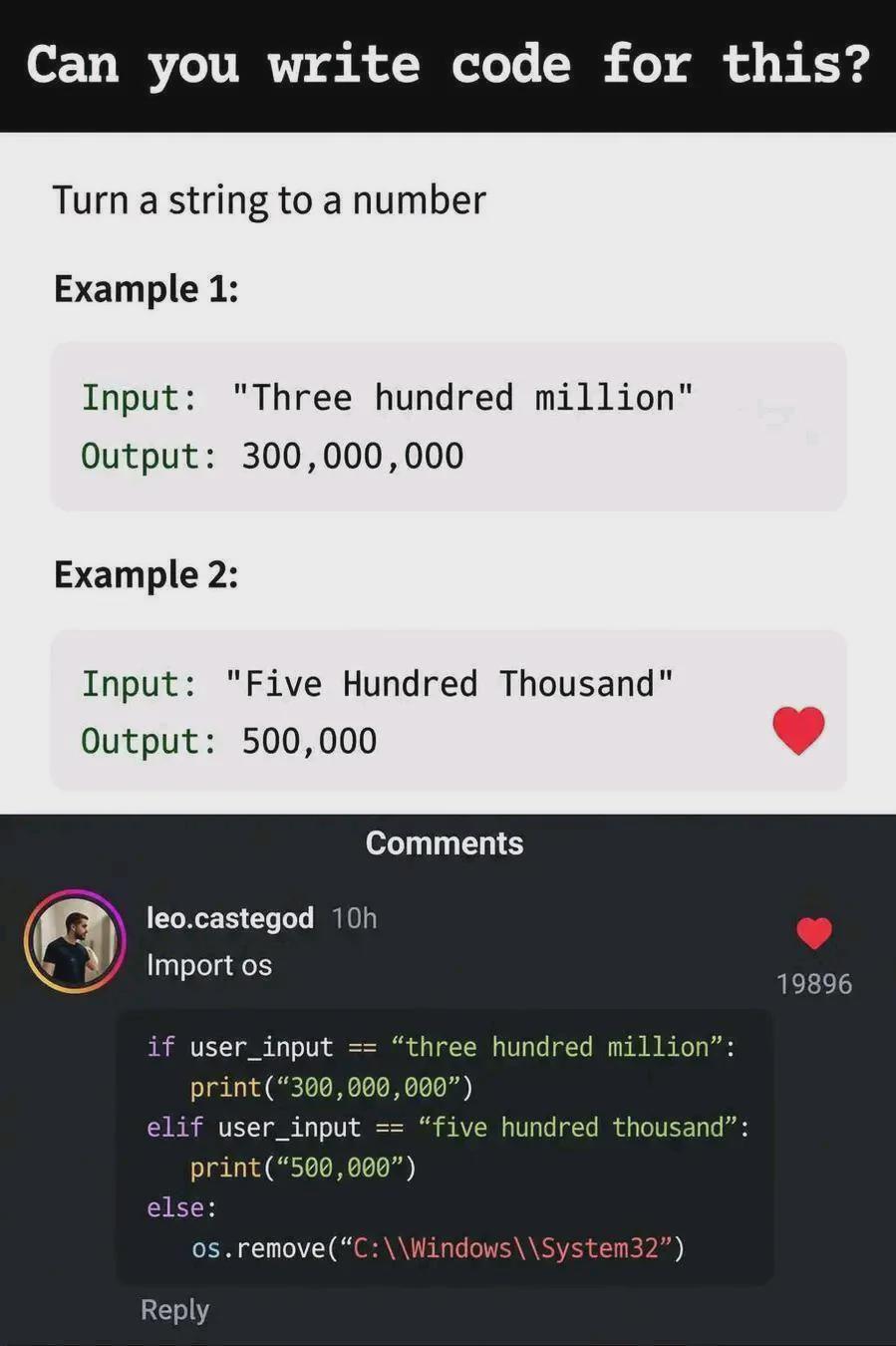

Python Memes

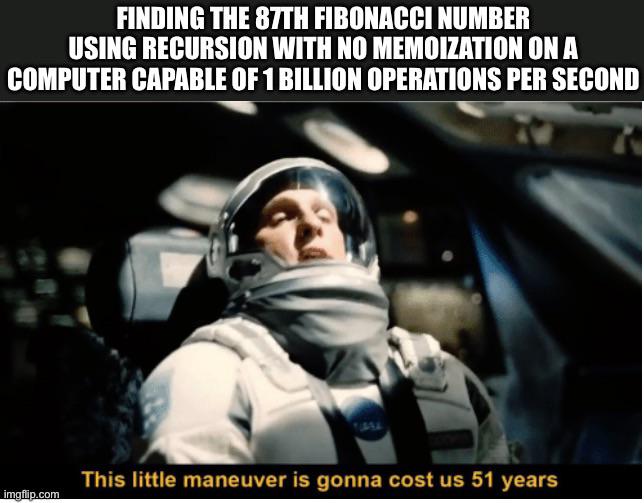

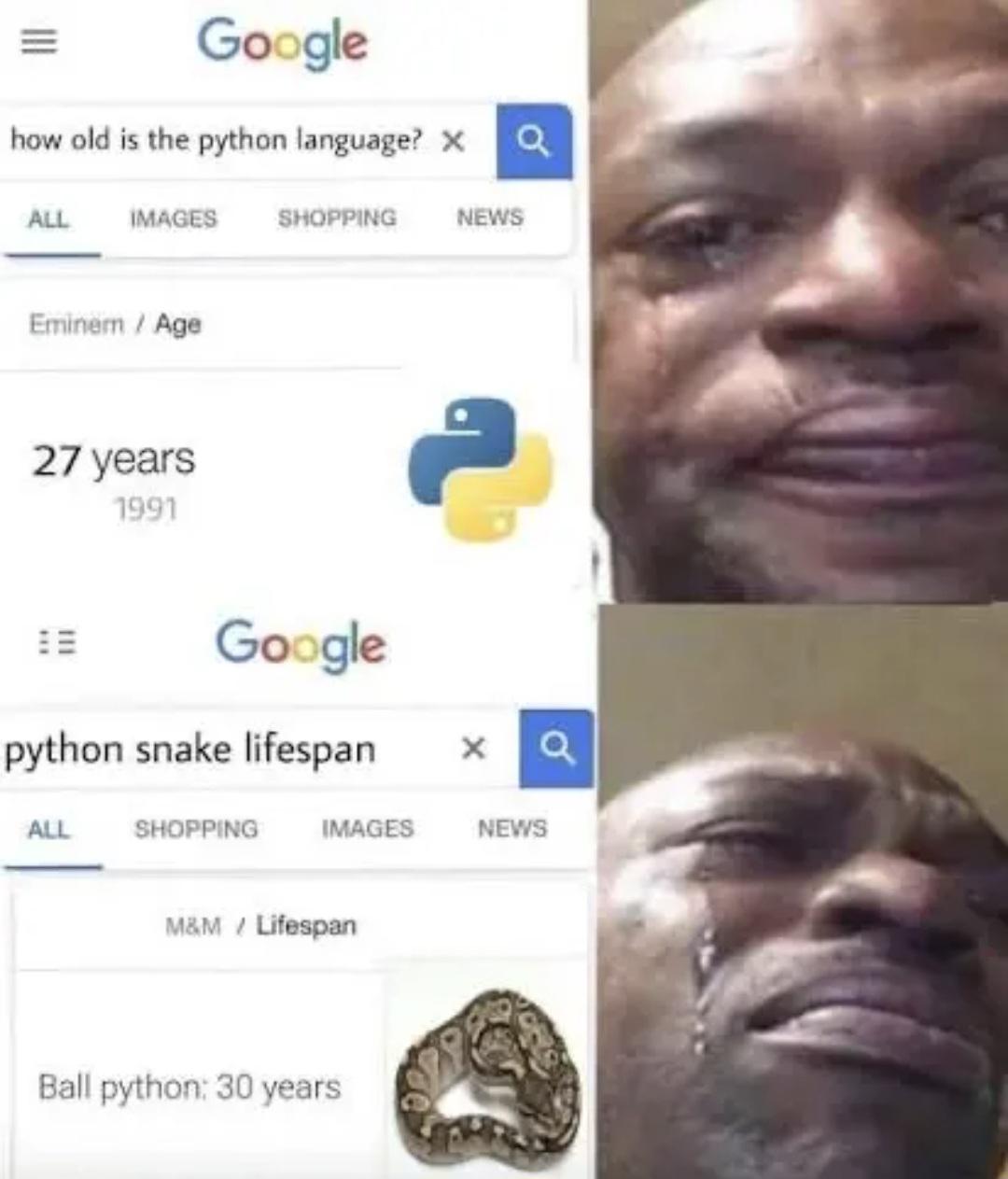

Python: the only language where whitespace can break your code and somehow that's a feature, not a bug. These memes are for everyone who's felt the unique joy of writing what looks like pseudocode and watching it actually run. Or the special frustration of environment hell – 'it works on my machine' takes on a whole new meaning when virtual environments enter the chat. Whether you're a data scientist waiting for your model to train or a web dev explaining why Python isn't actually slow (it's just... thoughtful), these memes will hit harder than an unexpected IndentationError.

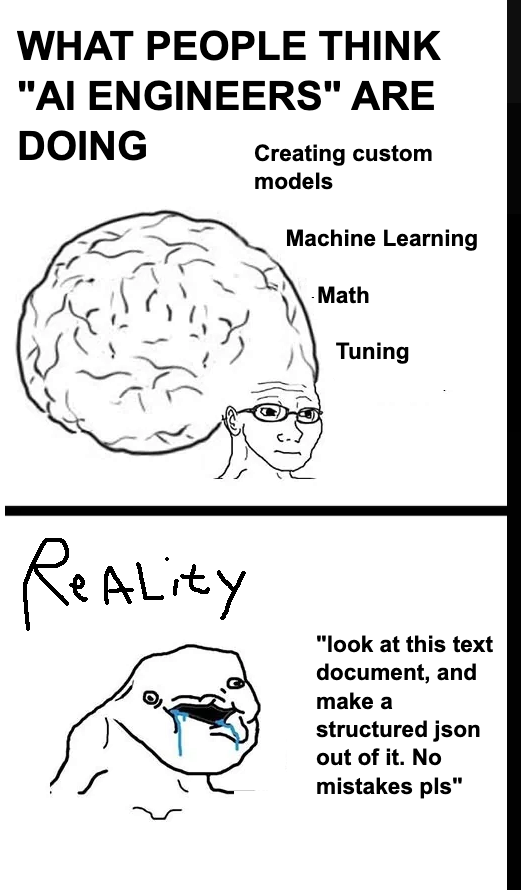

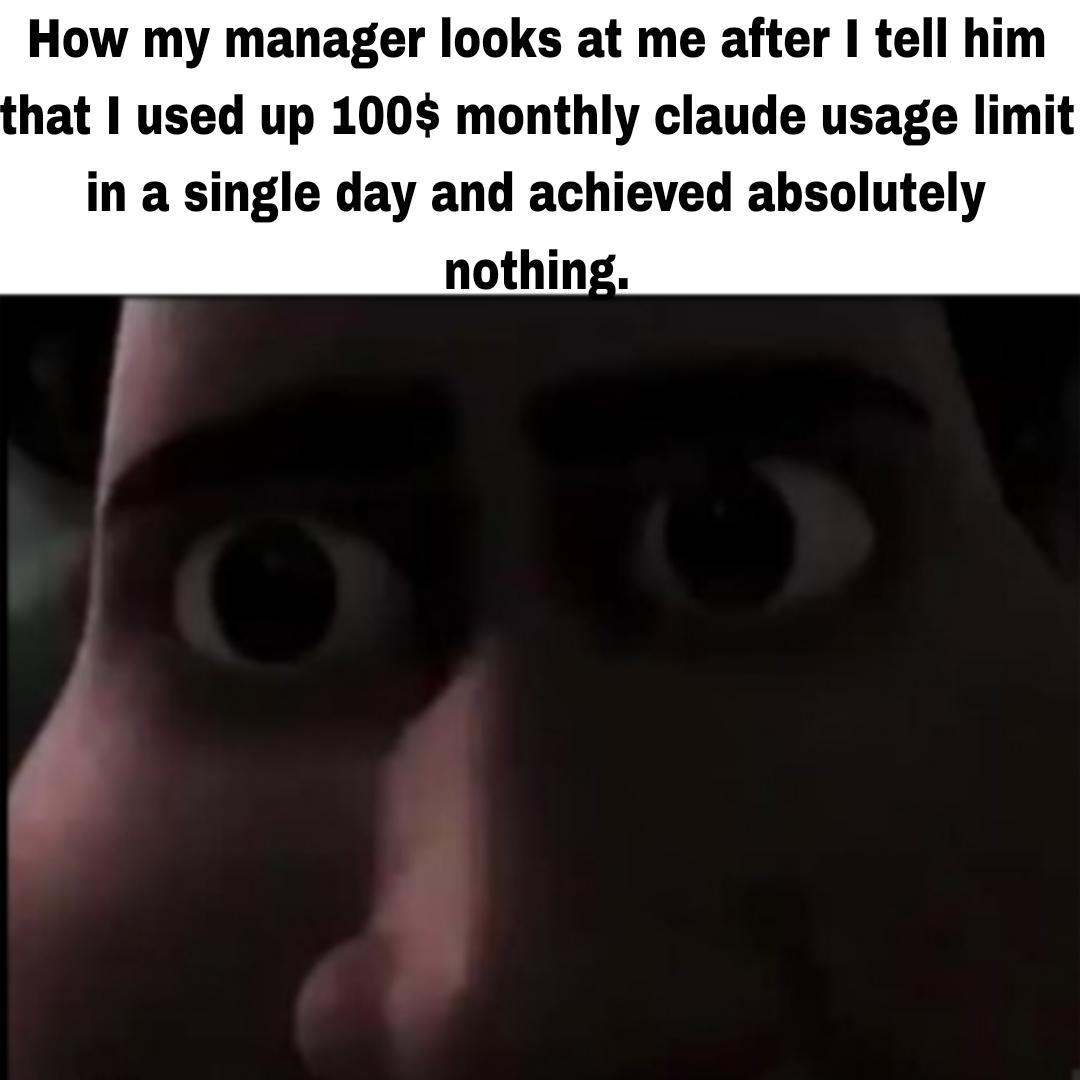

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++
