Today's picks

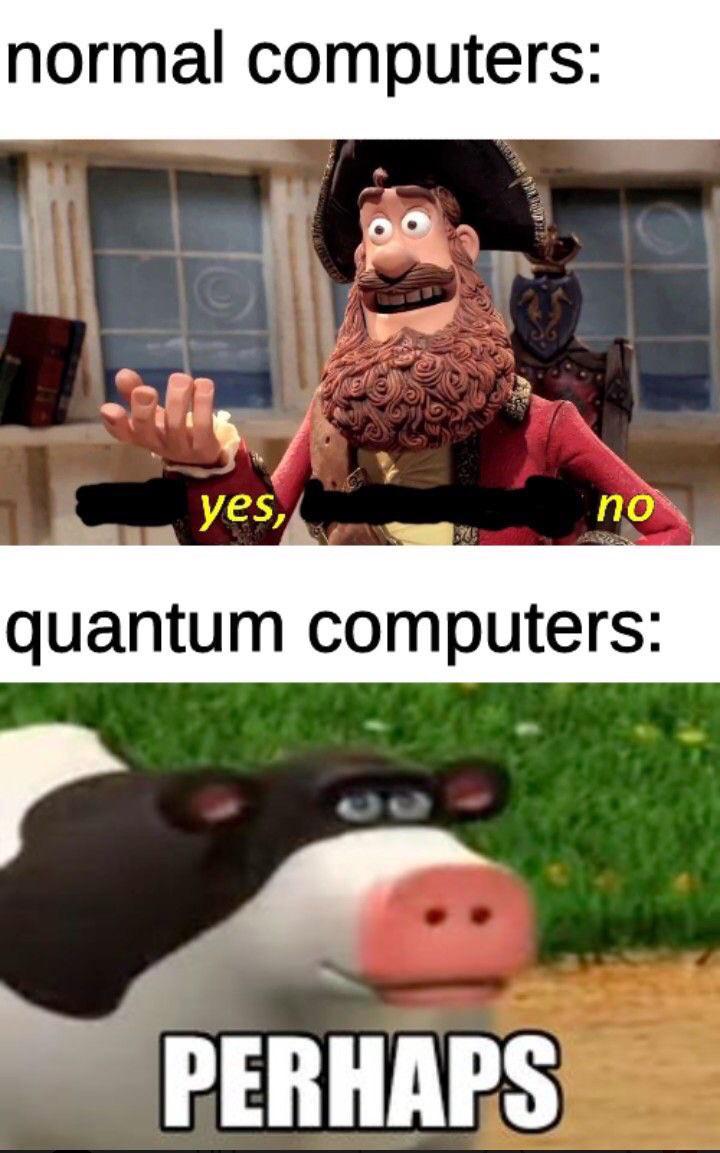

Maybe Maybe Not

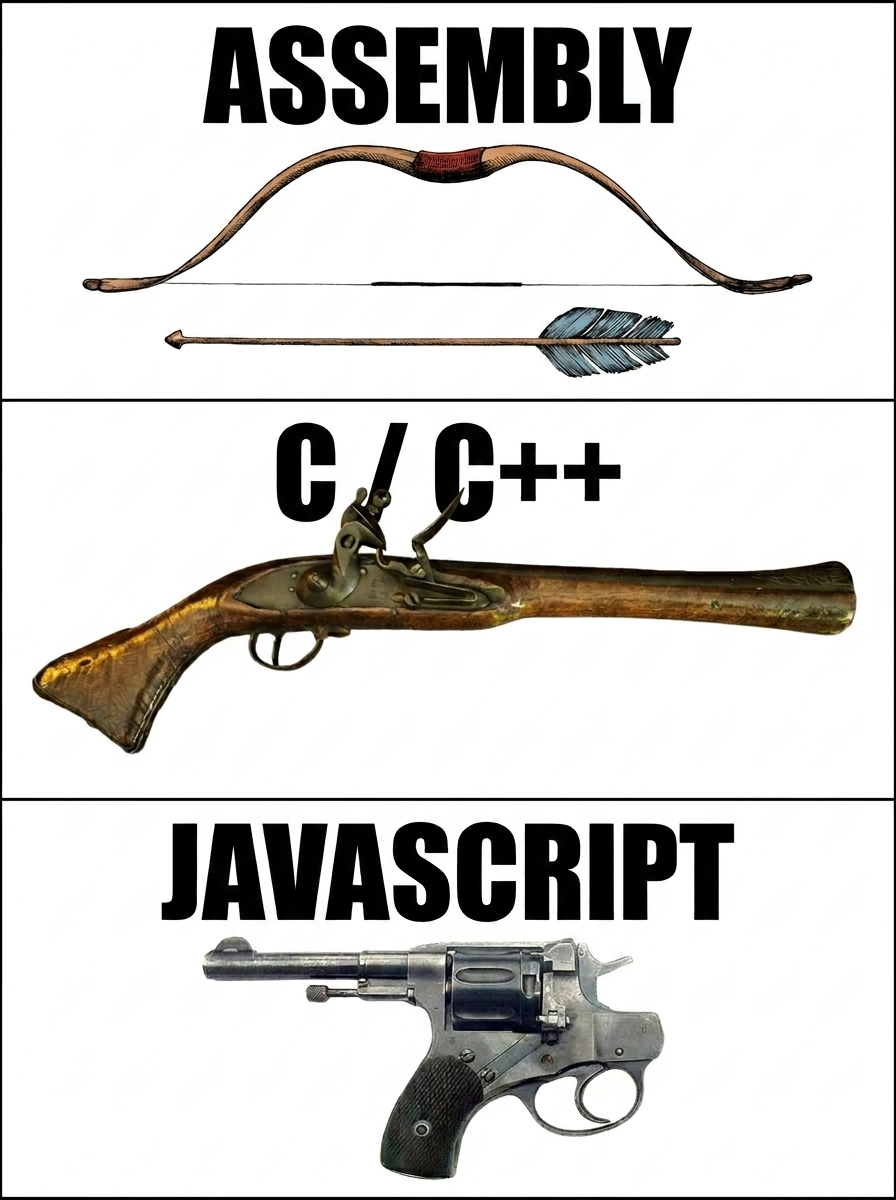

Programming

23.1M views

1 day ago

I always forget the correct syntax

Javascript

96.9K views

3 years ago

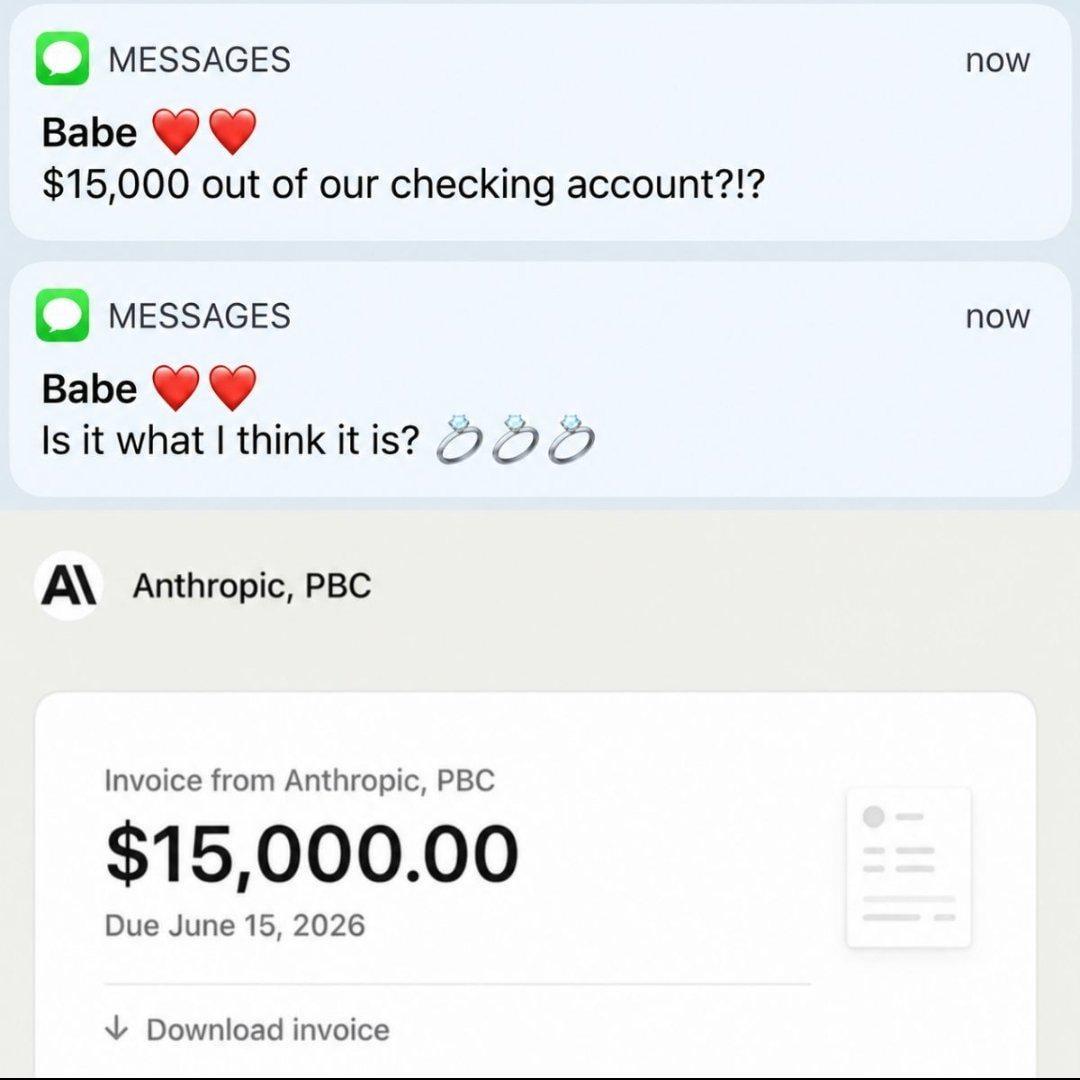

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++