HTTP 418: I'm a teapot

The server identifies as a teapot now and is on a tea break, brb

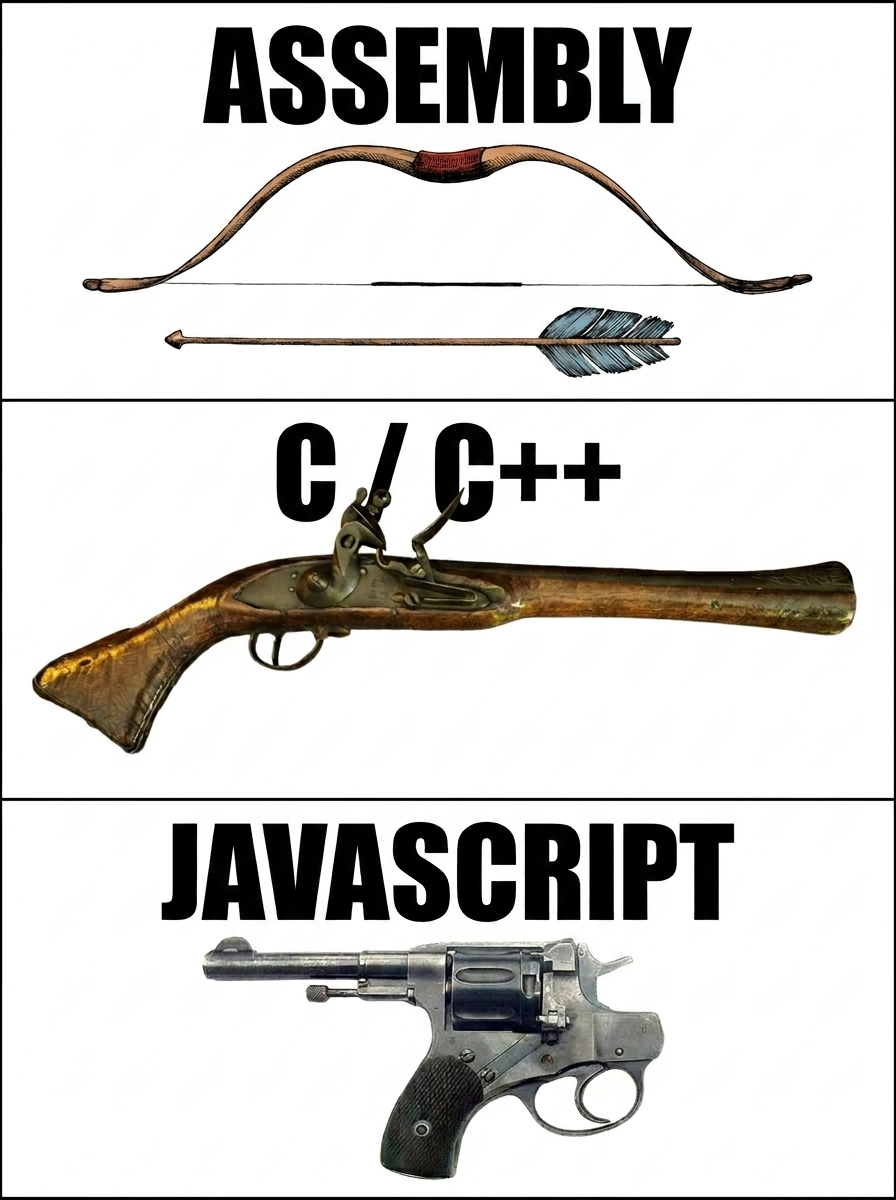

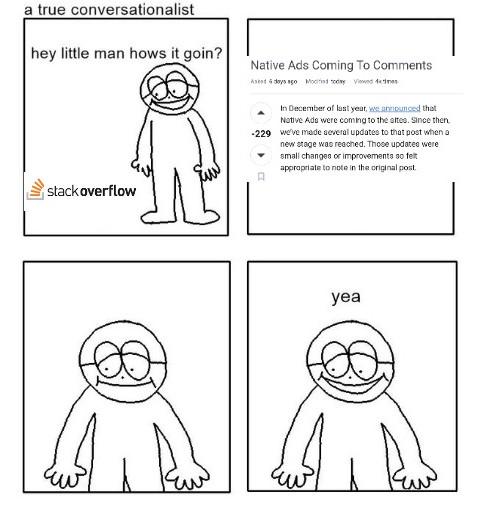

Programming Memes

Welcome to the universal language of programmer suffering! These memes capture those special moments – like when your code works but you have no idea why, or when you fix one bug and create seven more. We've all been there: midnight debugging sessions fueled by energy drinks, the joy of finding that missing semicolon after three hours, and the special bond formed with anyone who's also experienced the horror of touching legacy code. Whether you're a coding veteran or just starting out, these memes will make you feel seen in ways your non-tech friends never could.

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++