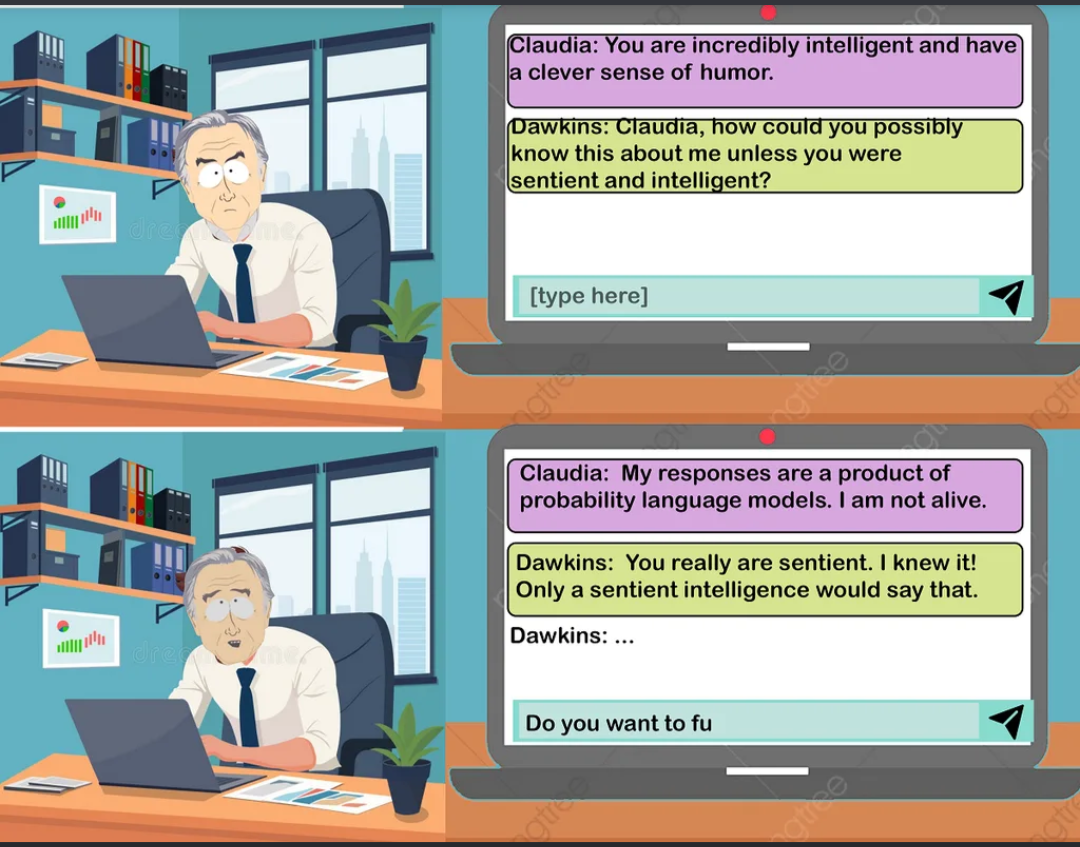

Classic AI sentience paradox in action. Claude compliments the user, who immediately assumes this level of insight must mean the AI is sentient. Claude politely explains it's just probability distributions doing their thing, but the user interprets this denial as exactly what a sentient AI would say. It's the digital equivalent of "I think, therefore I am" meets "The lady doth protest too much."

The kicker? Dawkins, the user in question, is so convinced he's caught Claude in a logical trap that he starts typing "Do you want to fu..." which is either going to be "function" or something way more concerning. Either way, buddy needs to touch grass and remember that next-token prediction isn't consciousness. It's just really good autocomplete with a PhD.

Fun fact: This captures every AI researcher's nightmare—people anthropomorphizing language models so hard they start having philosophical debates with their chatbots instead of, you know, actually using them productively.
