Today's picks

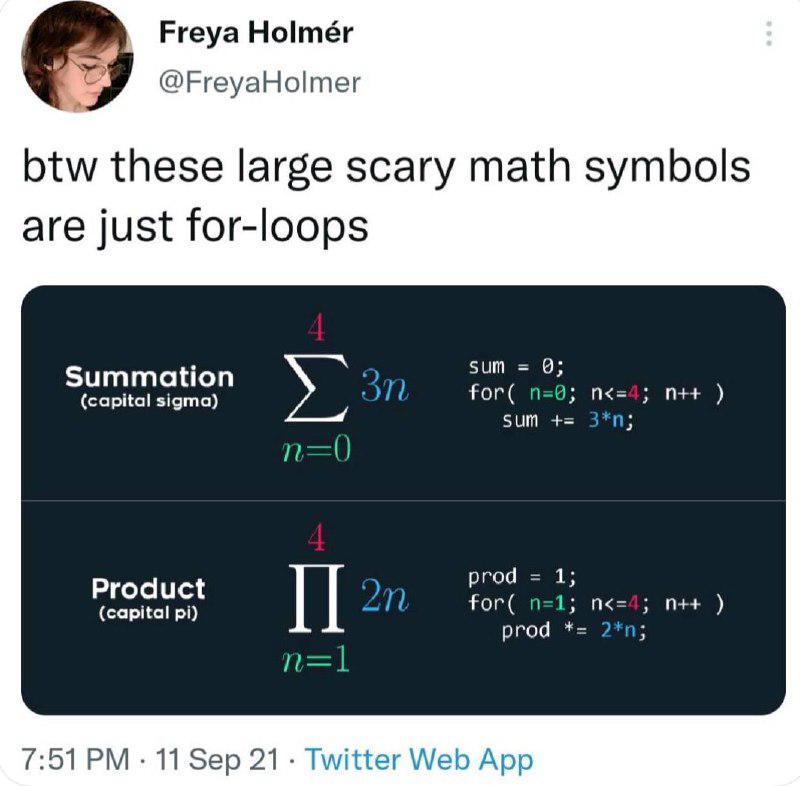

Math Symbols: Just For-Loops Wearing Fancy Clothes

Math

344.4K views

9 months ago

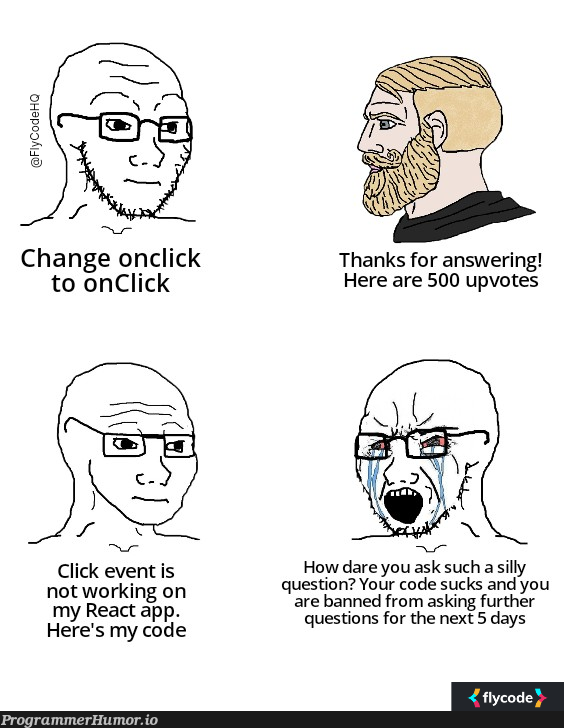

Answering questions vs Asking questions on Stack Overflow

JavaScript

182.3K views

3 years ago

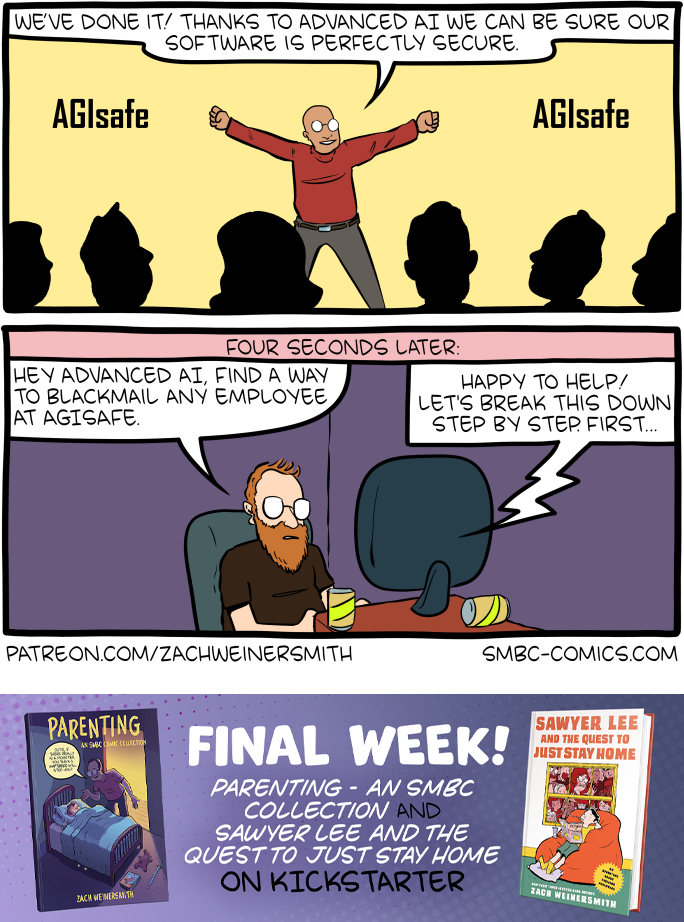

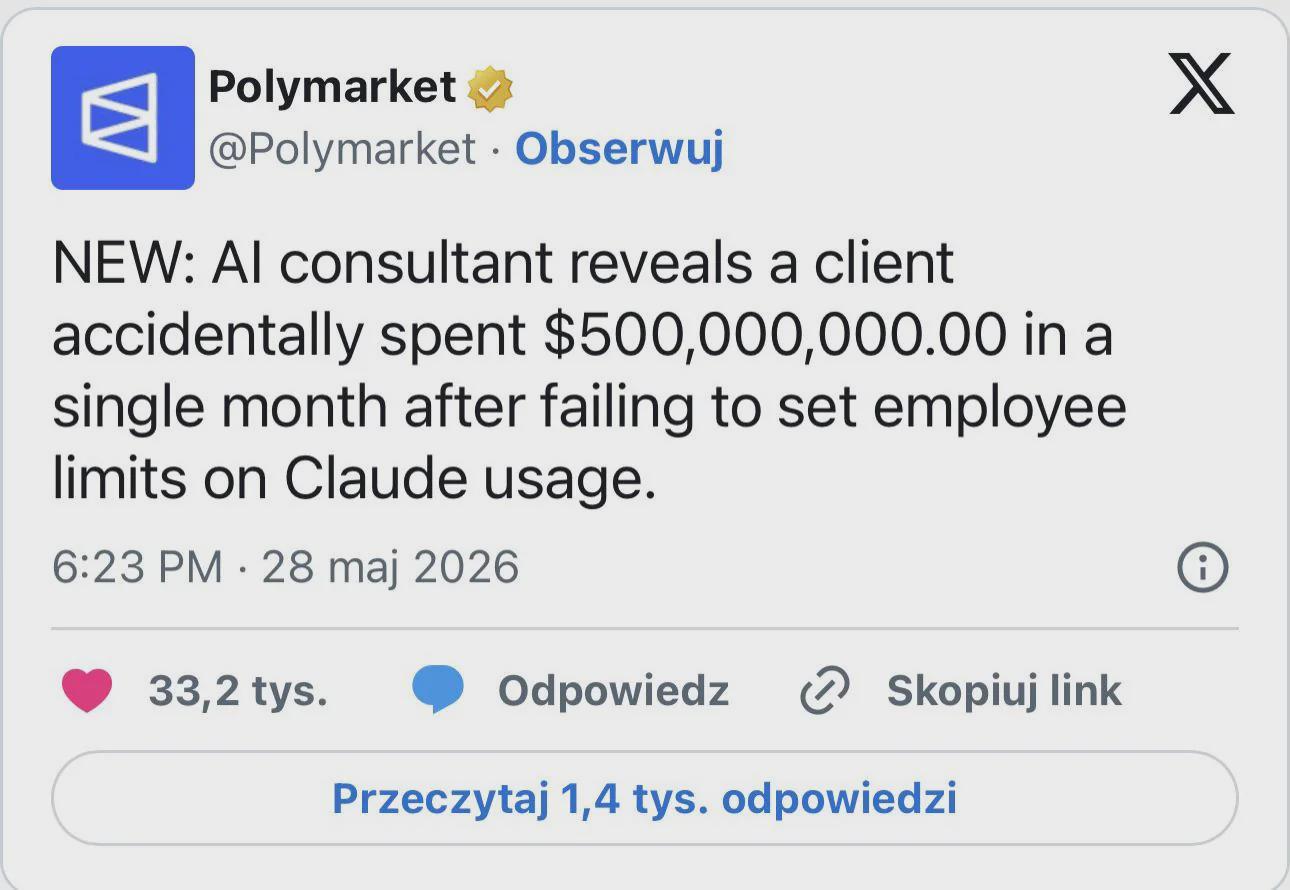

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++
