Today's picks

SunFounder Ultimate Starter Kit Compatible with Arduino UNO IDE Scratch, 3 in 1 IoT/Smart Car/Basic Kit with Online Tutorials, Video Courses, 192 Items, 87 Projects, Suitable for Age 8+ Beginners

Affiliate

$59.99

GearScouts.com

Sponsored

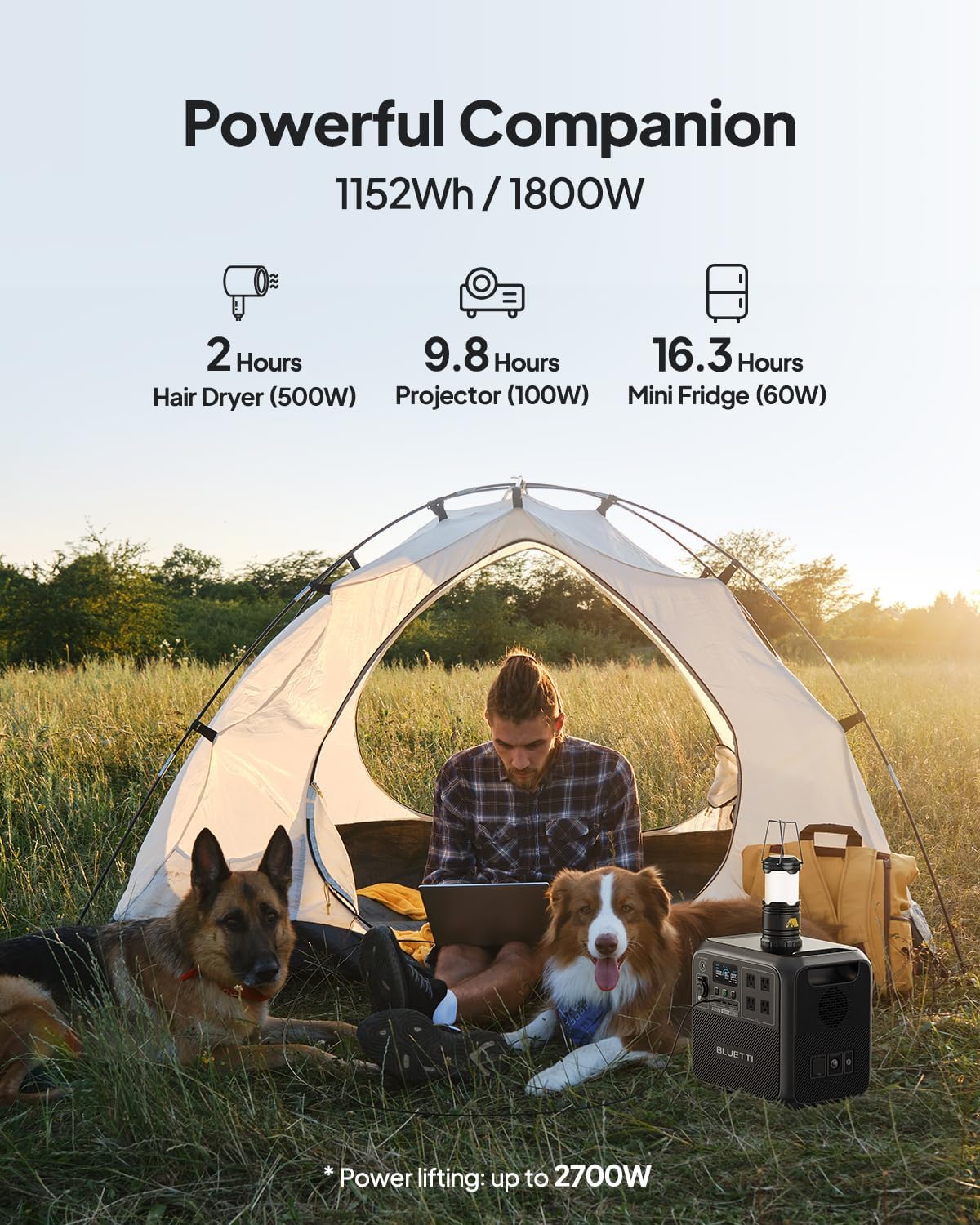

Power stations

Lemme Go Solve Some Github Issues

Linux

72.0K views

1 year ago

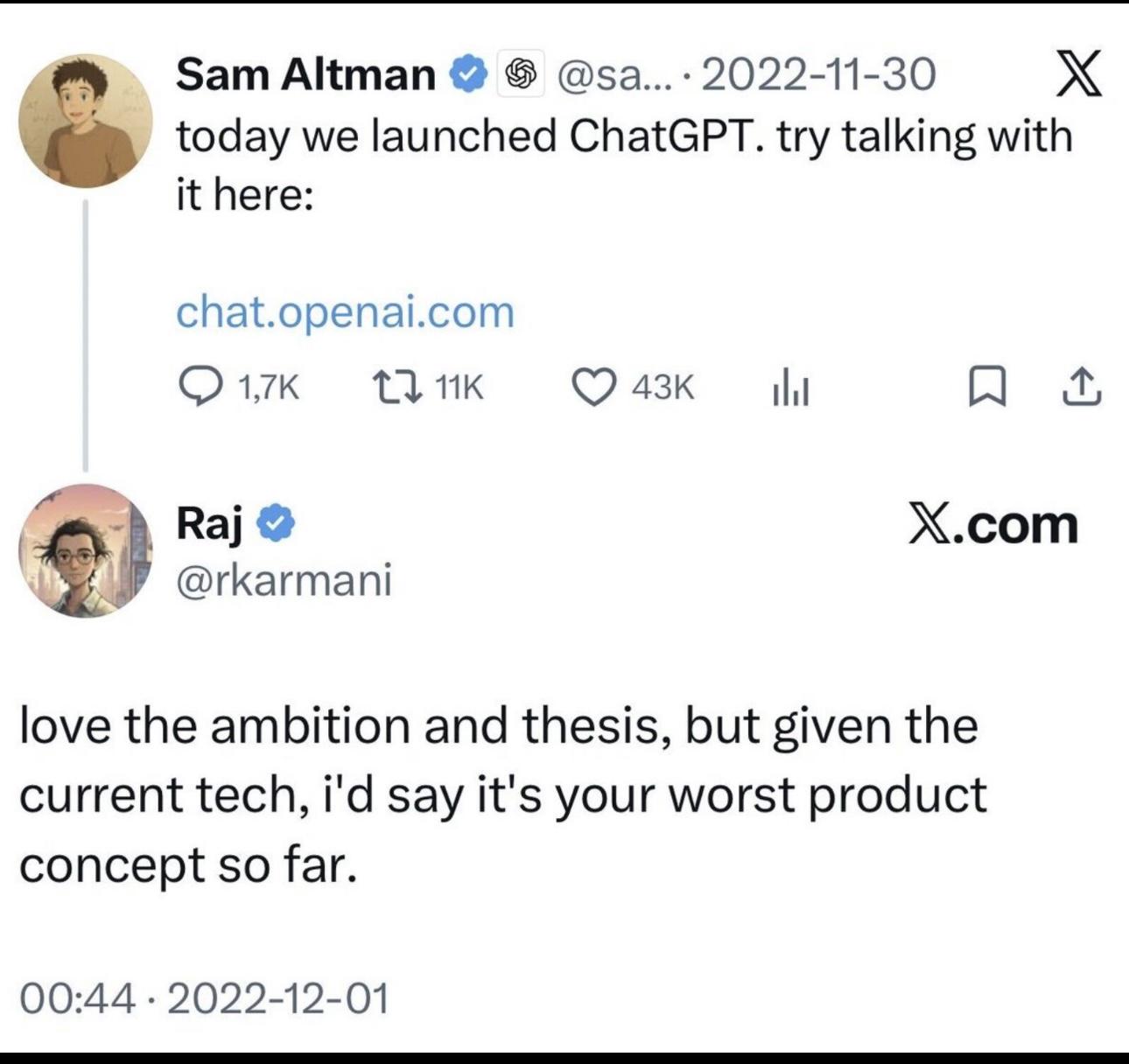

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++
