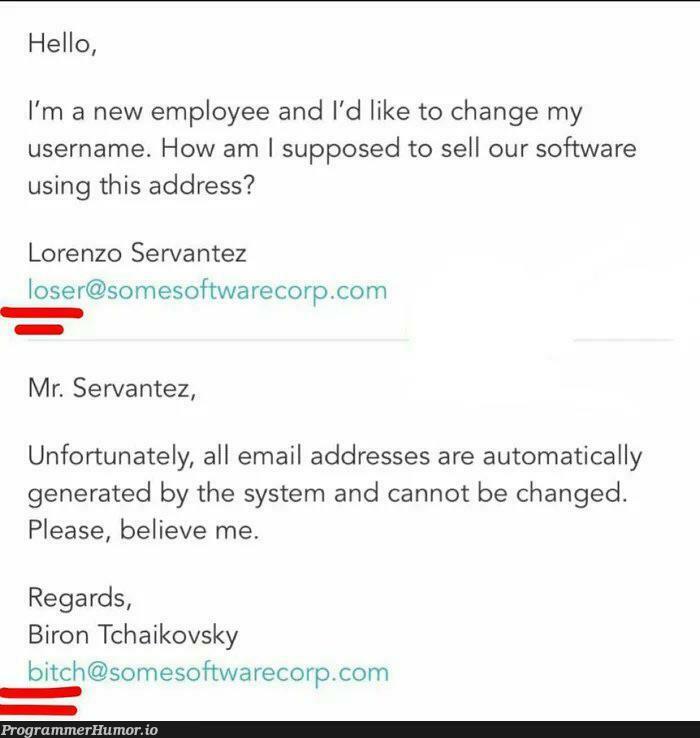

My boss sent me a meme and I need to share it!

Databases

86.6K views

2 years ago

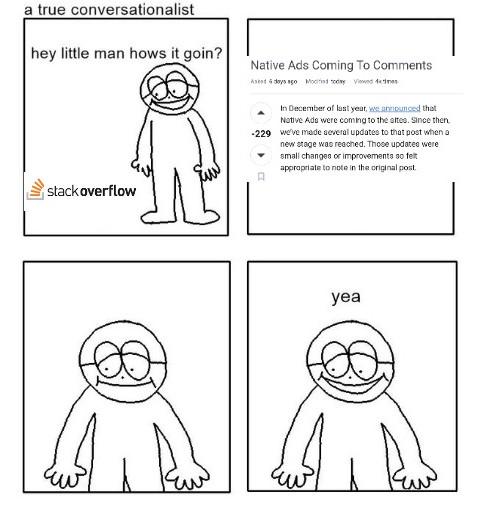

First comic try. What do you think?

Programming

83.2K views

4 years ago

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++