Today's picks

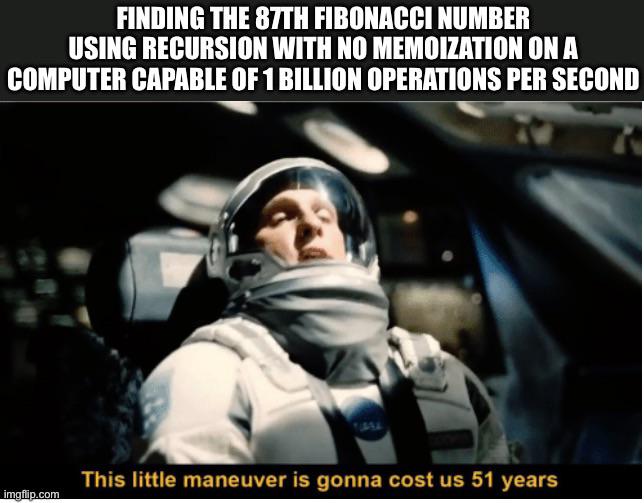

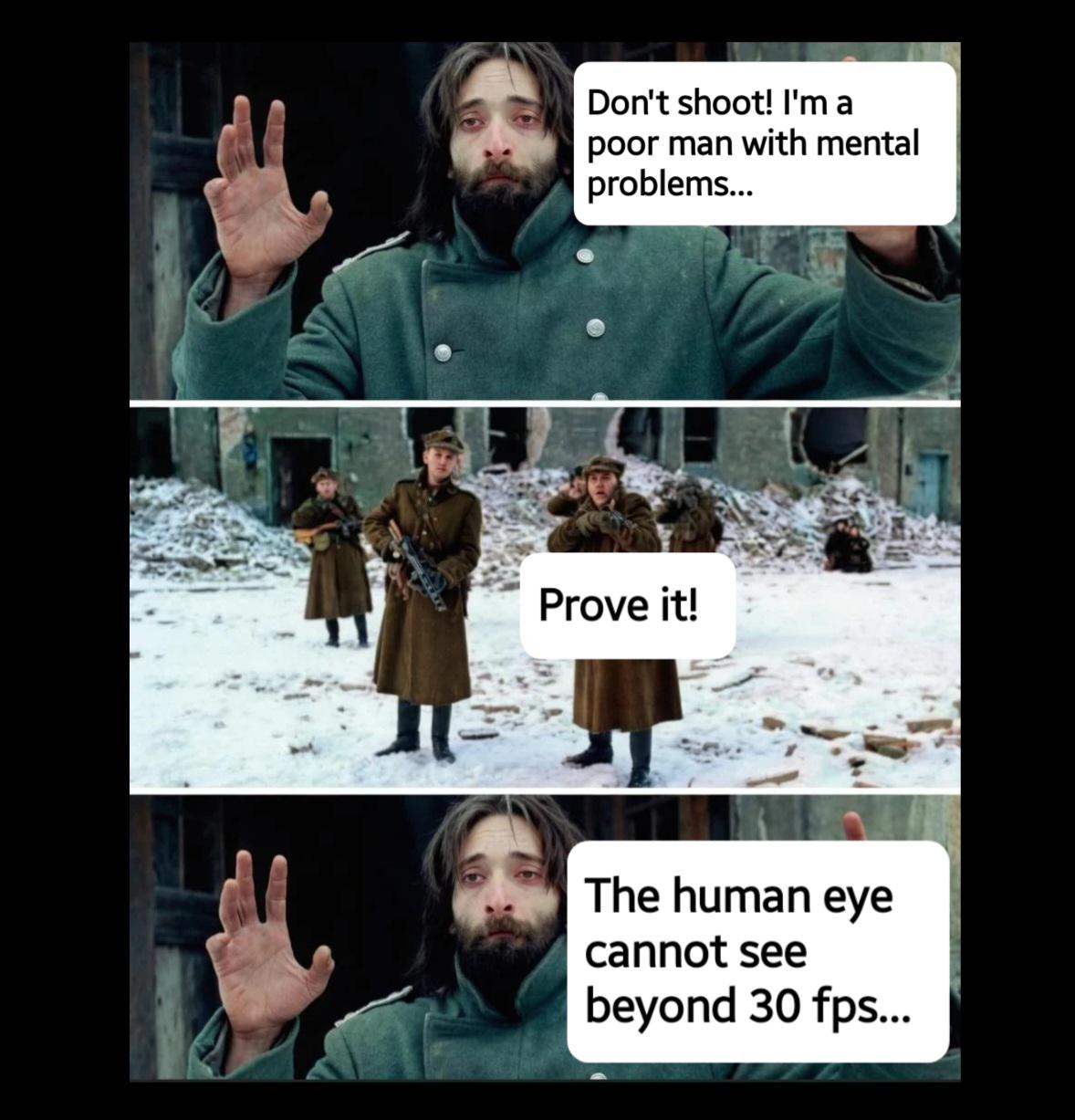

Learning ASM be like

Programming

72.0K views

1 year ago

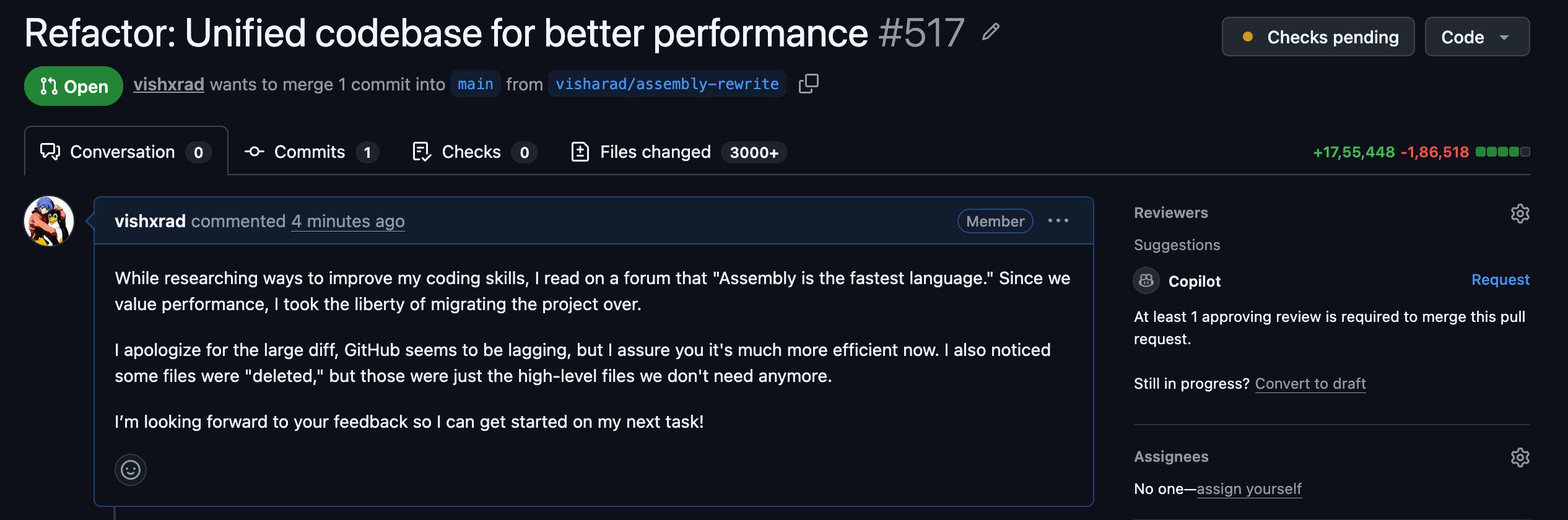

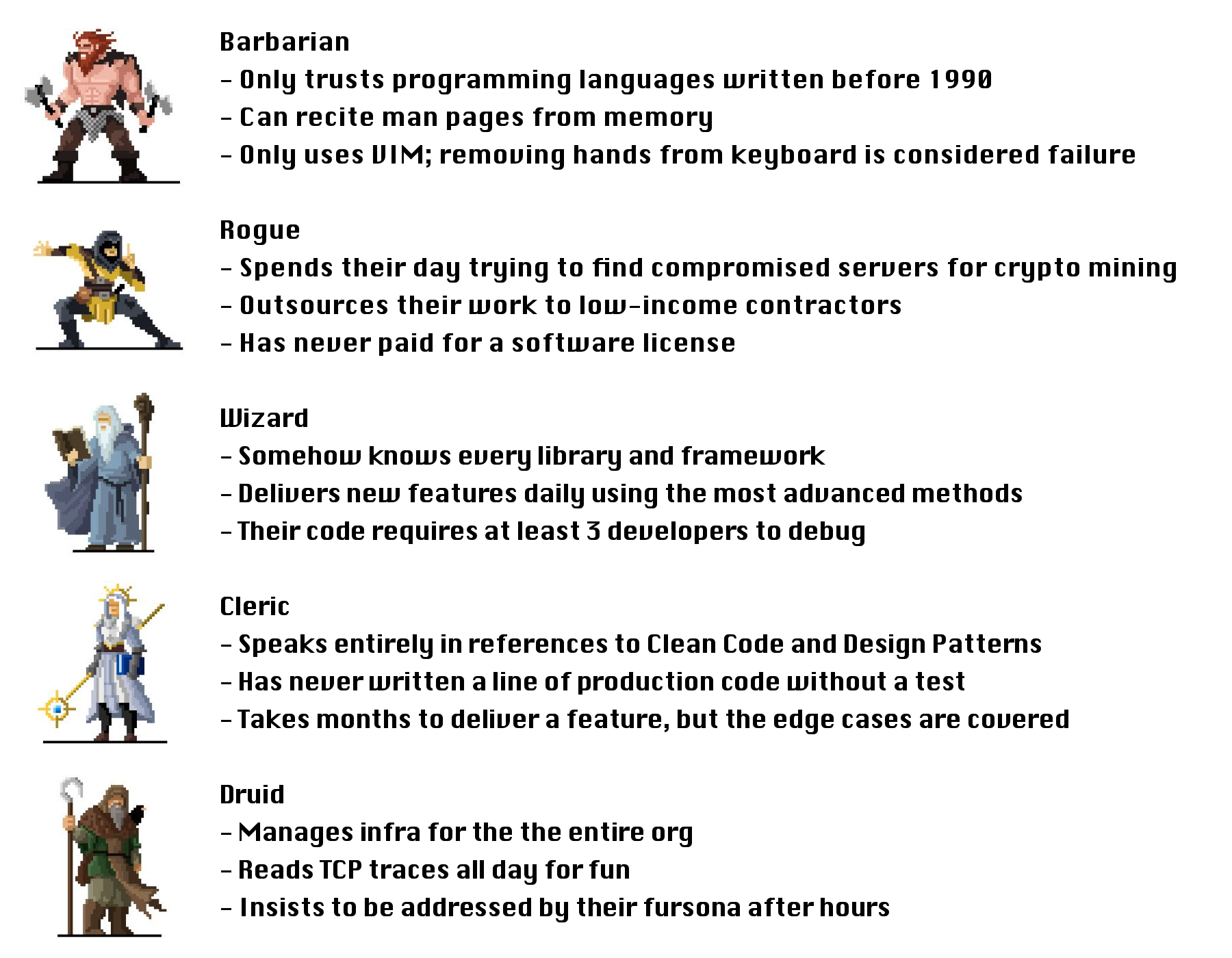

If Devs Were D&D Classes

Programming

439.4K views

1 year ago

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++
