Today's picks

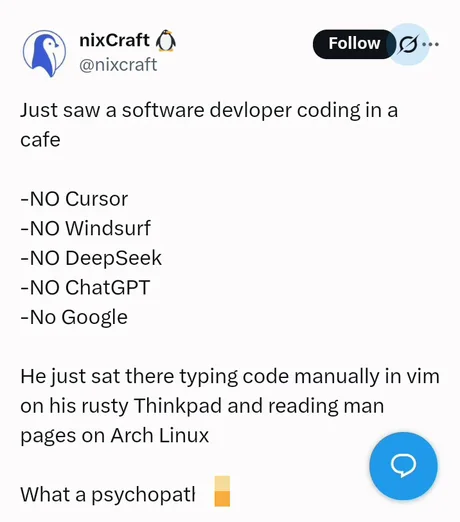

The Last Vim Samurai

Linux

699.6K views

11 months ago

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++