Today's picks

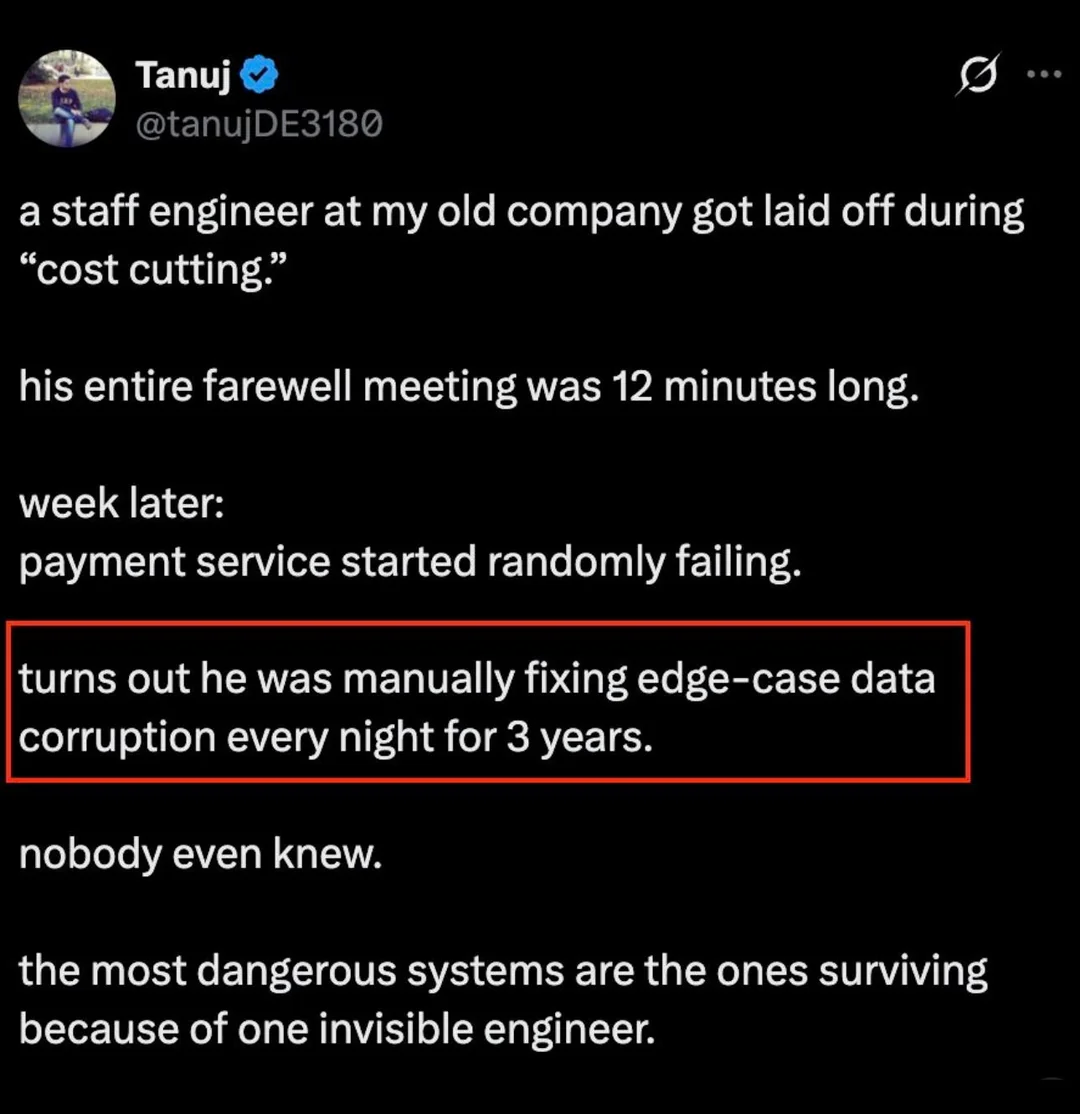

Only Option Remaining

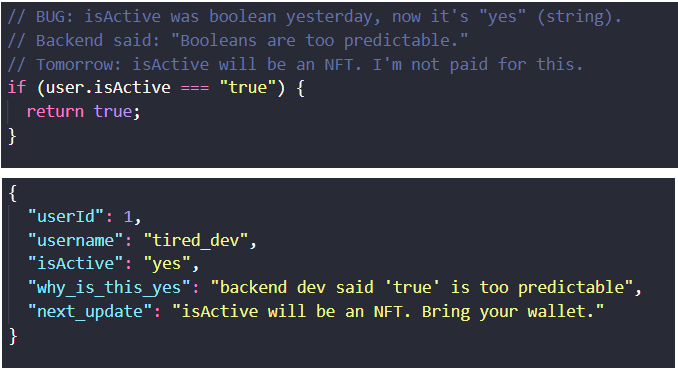

Programming

19.3M views

4 days ago

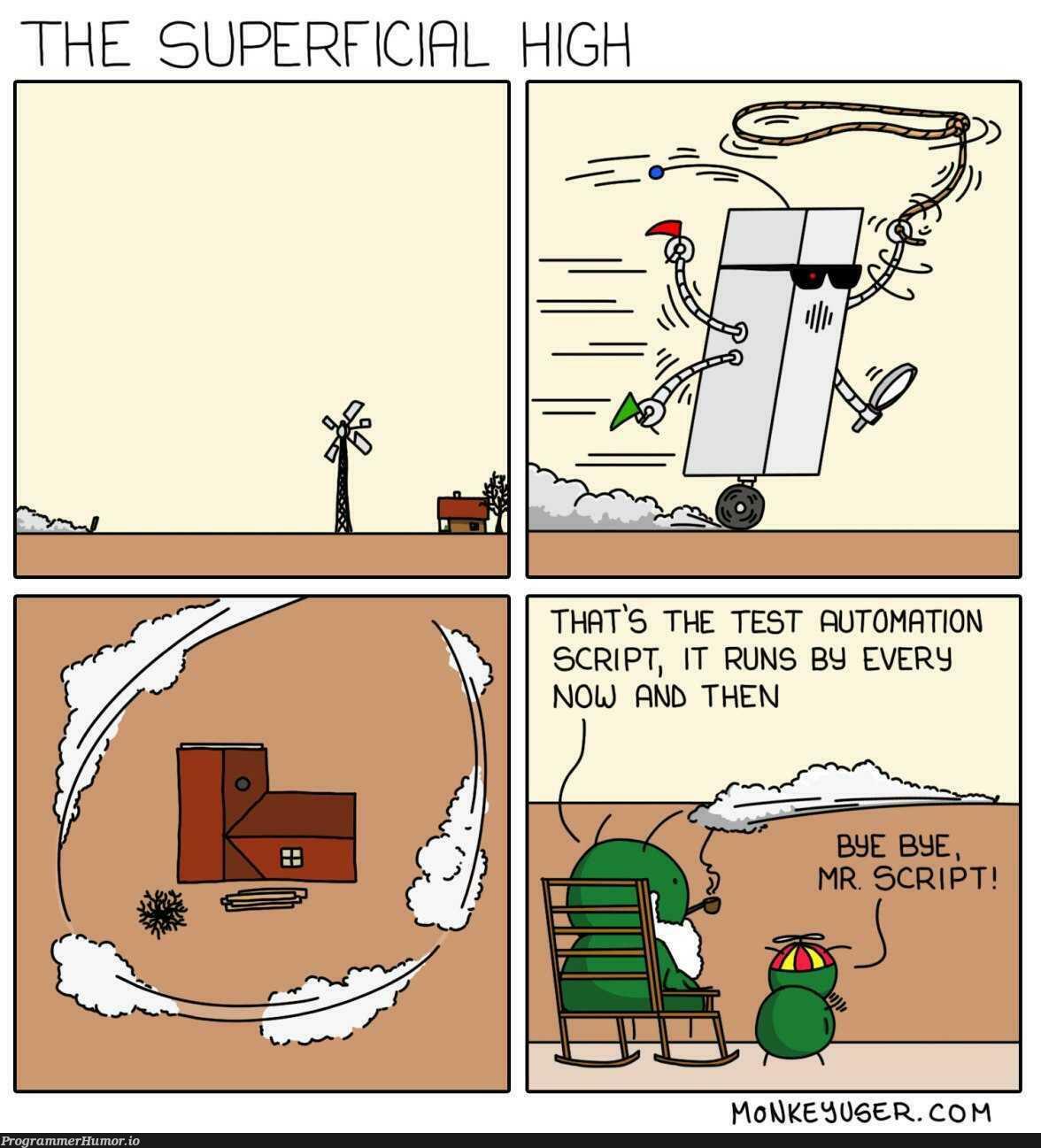

Good bye Mr. Script

Testing

35.0K views

3 years ago

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++
