Today's picks

Maybe Maybe Not

Programming

22.3M views

22 hours ago

Array indexing, round 3

Programming

71.3K views

4 years ago

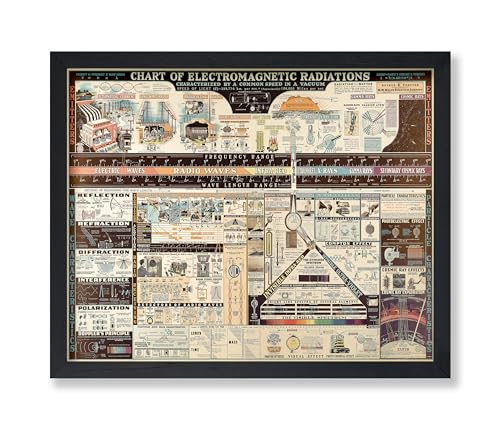

Poster Master Vintage Science Poster - Chart of Electromagnetic Radiations Print - Electric Waves Art - Gift for Teacher, Student - Great Decor for Classroom, Library, Dorm - 8x10 UNFRAMED Wall Art

Affiliate

$13.95

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++