Today's picks

Maybe Maybe Not

Programming

22.6M views

23 hours ago

Visual Studio 2017

Debugging

63.4K views

2 years ago

EZTOOLS 926LED V3 Entry-Level 60W Soldering Station Iron Kit in Black with Temperature Control includes Helping Hands, Lead-Free Solder, 6 Soldering Tips, ESD-Safe Tweezers, Sleep Mode

Affiliate

$34.99

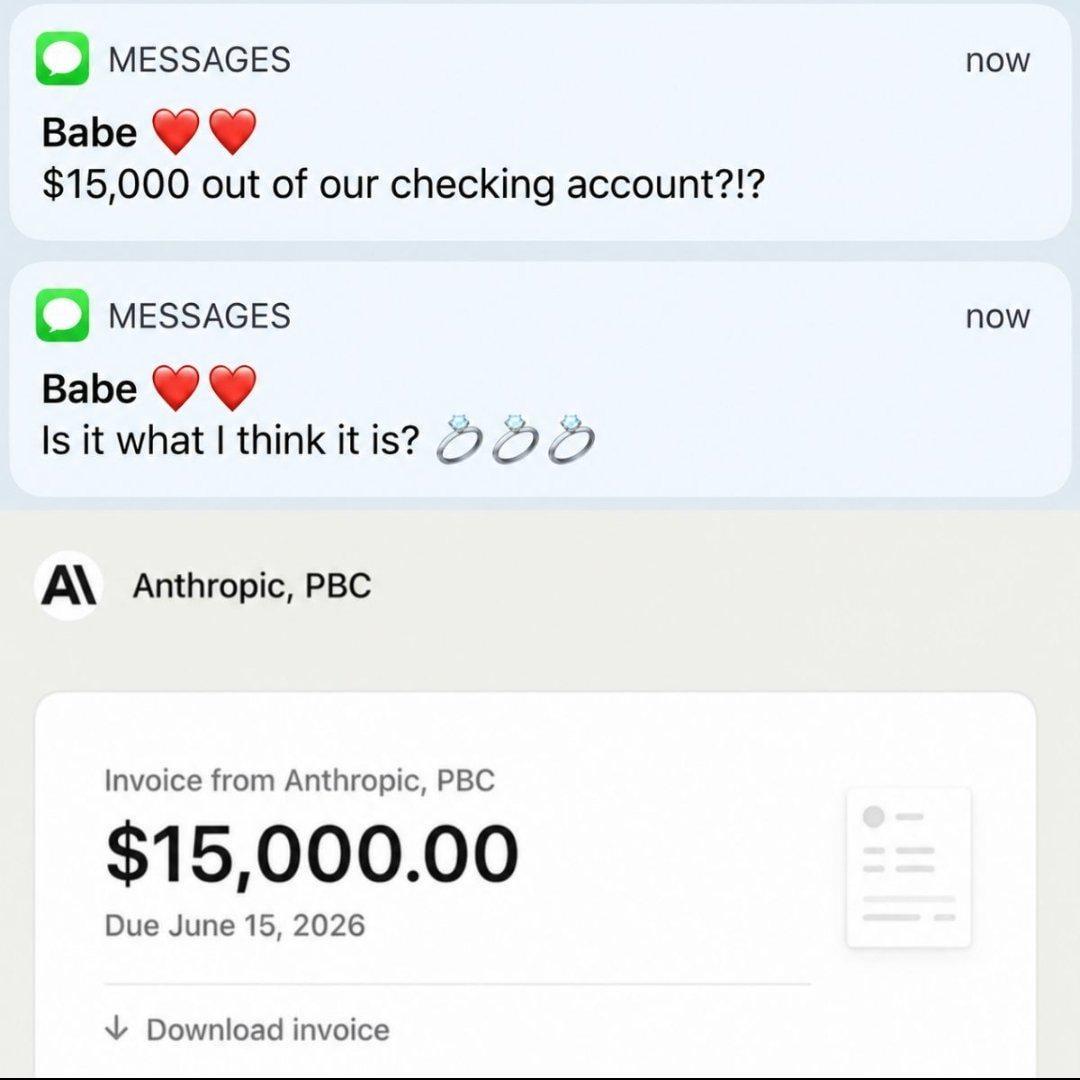

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++
