Today's picks

They Downgraded To 64

Hardware

1.7M views

1 day ago

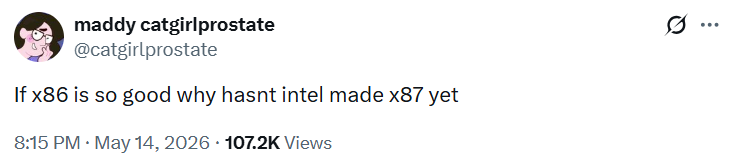

Discord recruiting from the console

JavaScript

80.9K views

3 years ago

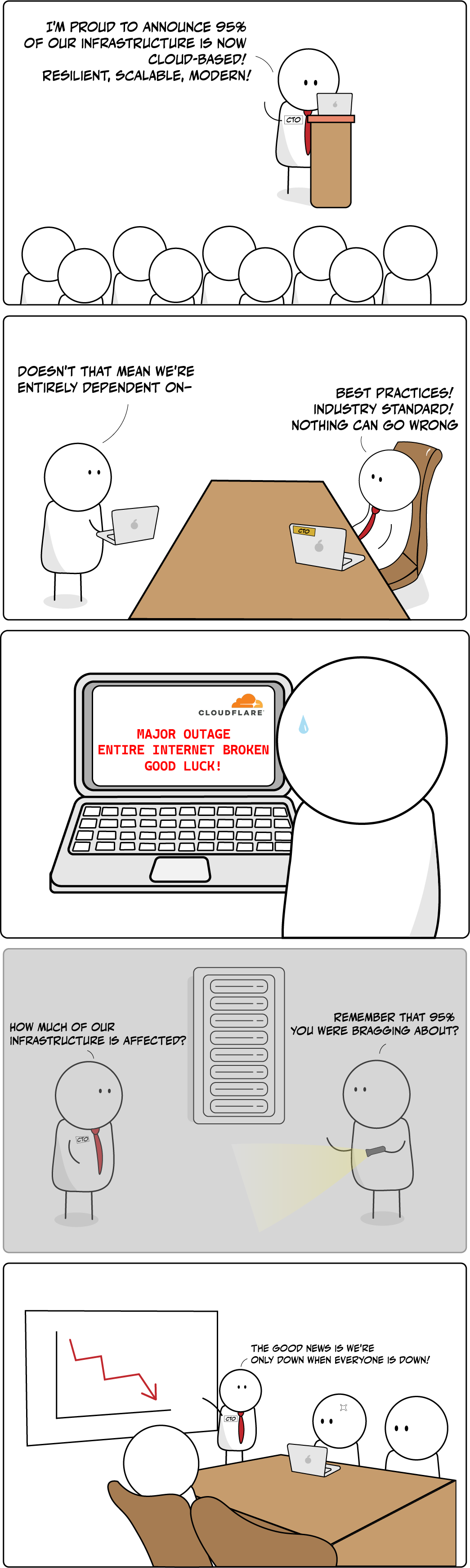

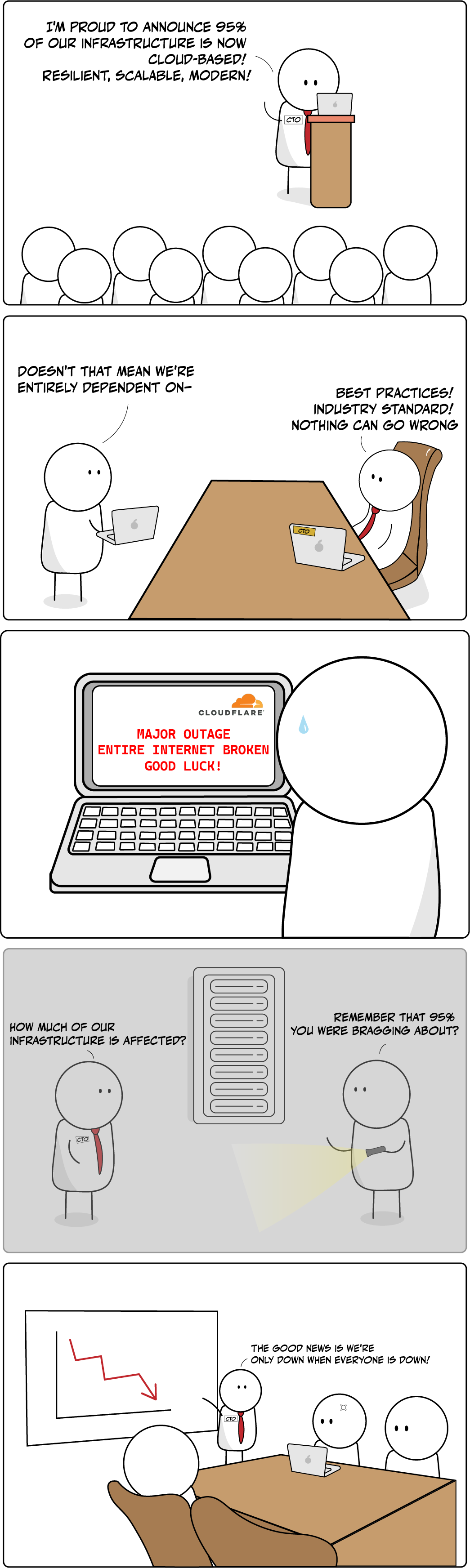

AI

AI

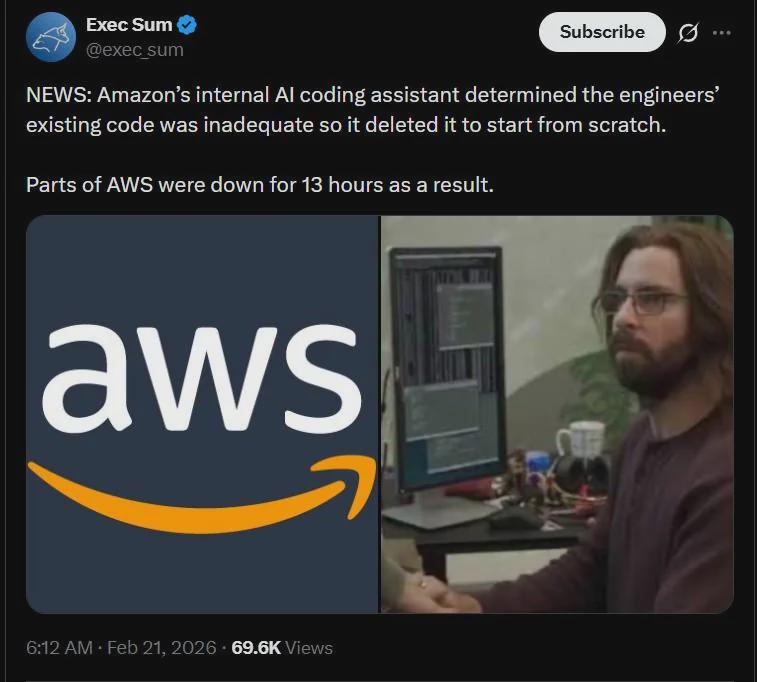

AWS

Agile

Algorithms

Android

Apple

Bash

C++

Csharp
