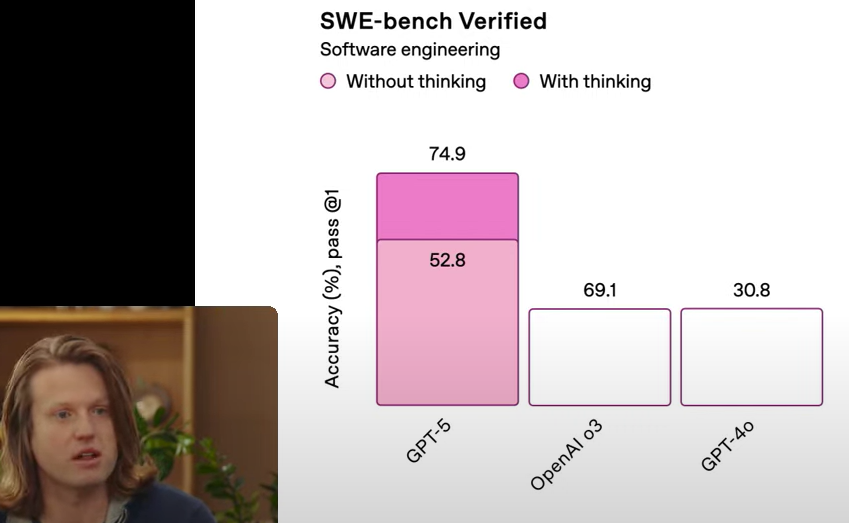

The chart hilariously reveals that GPT-5 scores a whopping 74.9% accuracy on SWE-bench Verified, a software engineering benchmark, but the pink bars tell the real story: 52.8% is achieved "without thinking," so actual "thinking" adds only the remaining 22.1 points, drawn as a tiny sliver. Meanwhile, OpenAI's o3 and GPT-4o trail behind at 69.1% and 30.8% respectively, with apparently zero thinking involved. It's basically saying these AI models are just regurgitating patterns rather than performing actual reasoning: the perfect metaphor for when your code works but you have absolutely no idea why.

SWE-Bench Verified: Thinking Optional

9 months ago

ai-memes, machine-learning-memes, gpt-memes, benchmarks-memes, software-engineering-memes | ProgrammerHumor.io

More Like This

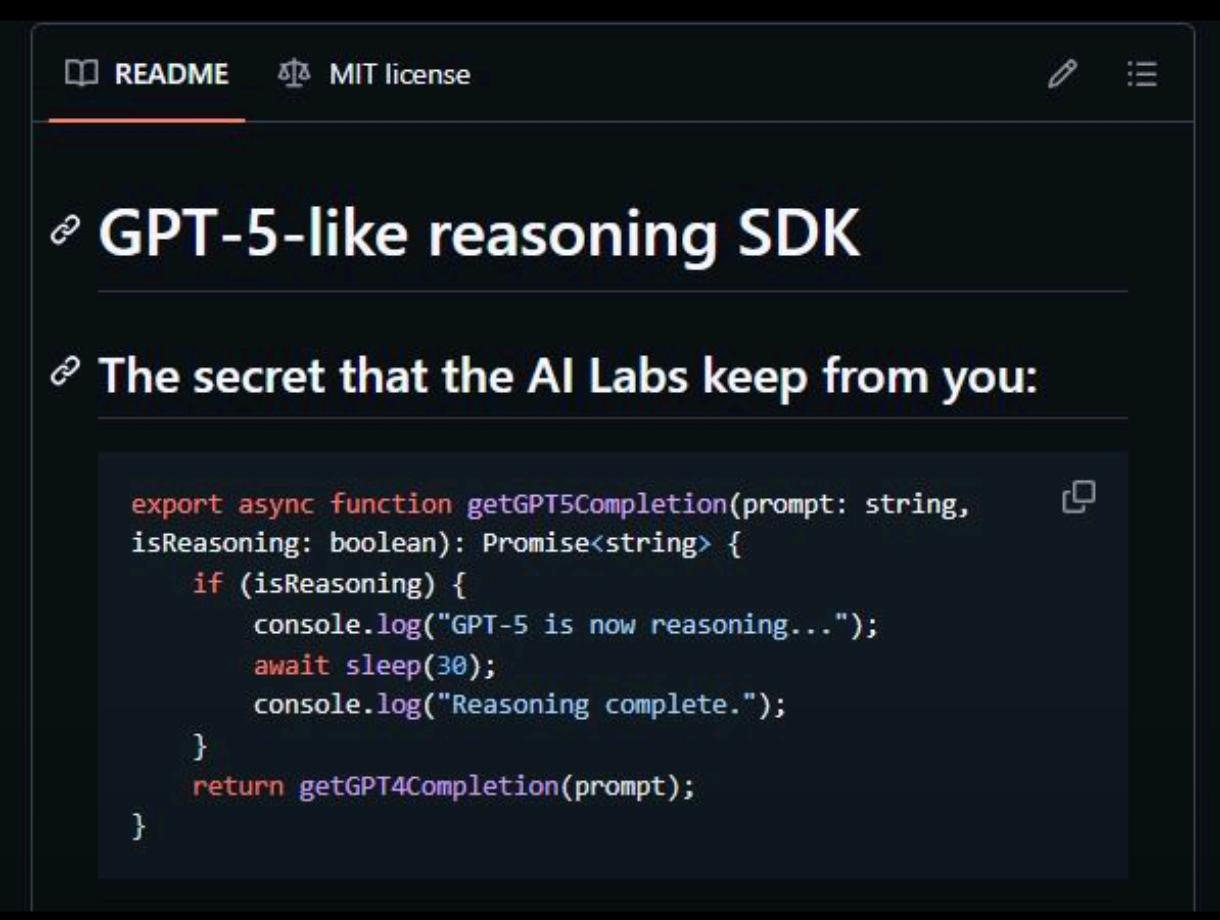

The Only Right Way To Implement AI Reasoning

8 months ago

417.3K views

1 shares

We Don't Want Your Data

23 days ago

573.5K views

1 shares

Perfect Reddit Screen

5 months ago

429.8K views

0 shares

Leyland Designs Gg - Git Gud (Black) Bumper Sticker Window Water Bottle Decal 5""

Affiliate

Stickers

Leyland Designs

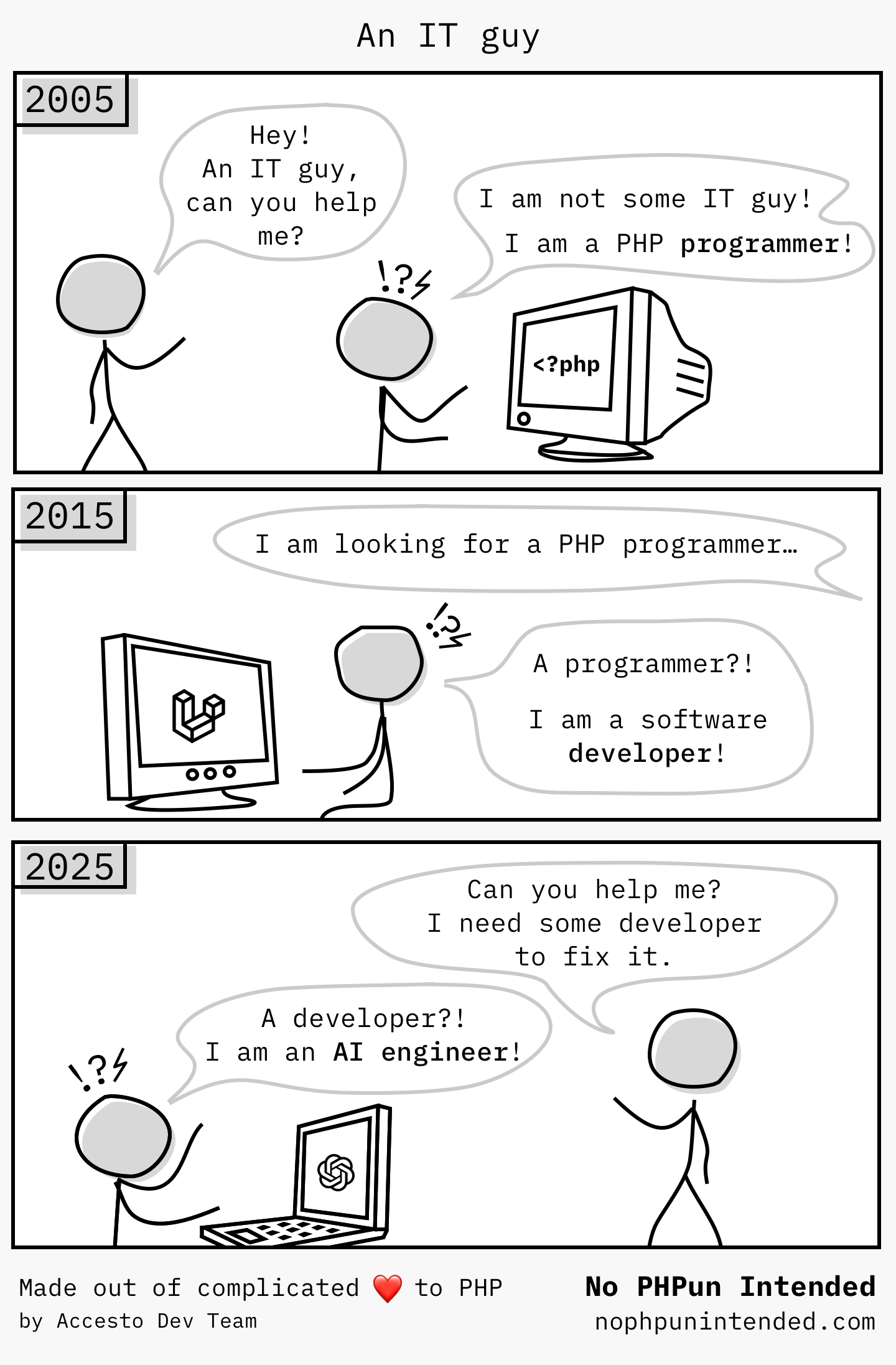

The Great Tech Title Inflation

6 months ago

345.5K views

0 shares

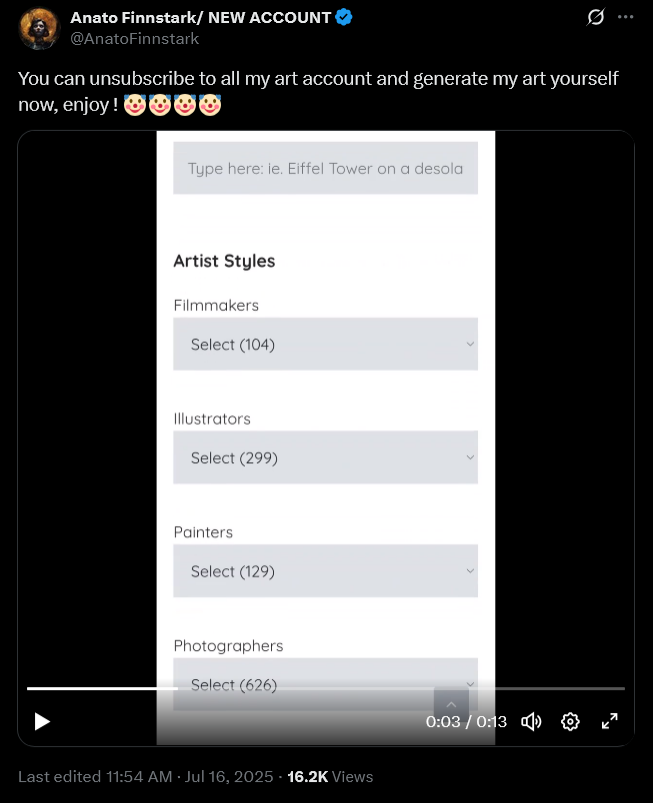

Select * From Art Where Creativity = Null

10 months ago

351.6K views

0 shares

Loading more content...

AI

AI

AWS

AWS

Agile

Agile

Algorithms

Algorithms

Android

Android

Apple

Apple

Bash

Bash

C++

C++

Csharp

Csharp