Politics Have Gone Too Far

JavaScript

164.6K views

2 years ago

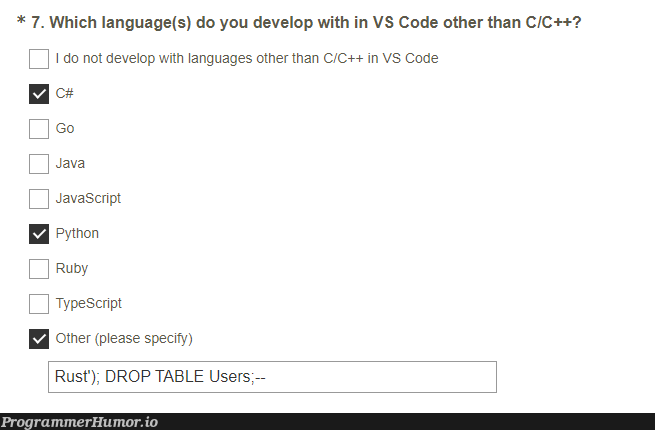

Let's see if they sanitise their data

JavaScript

169.6K views

2 years ago

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++
