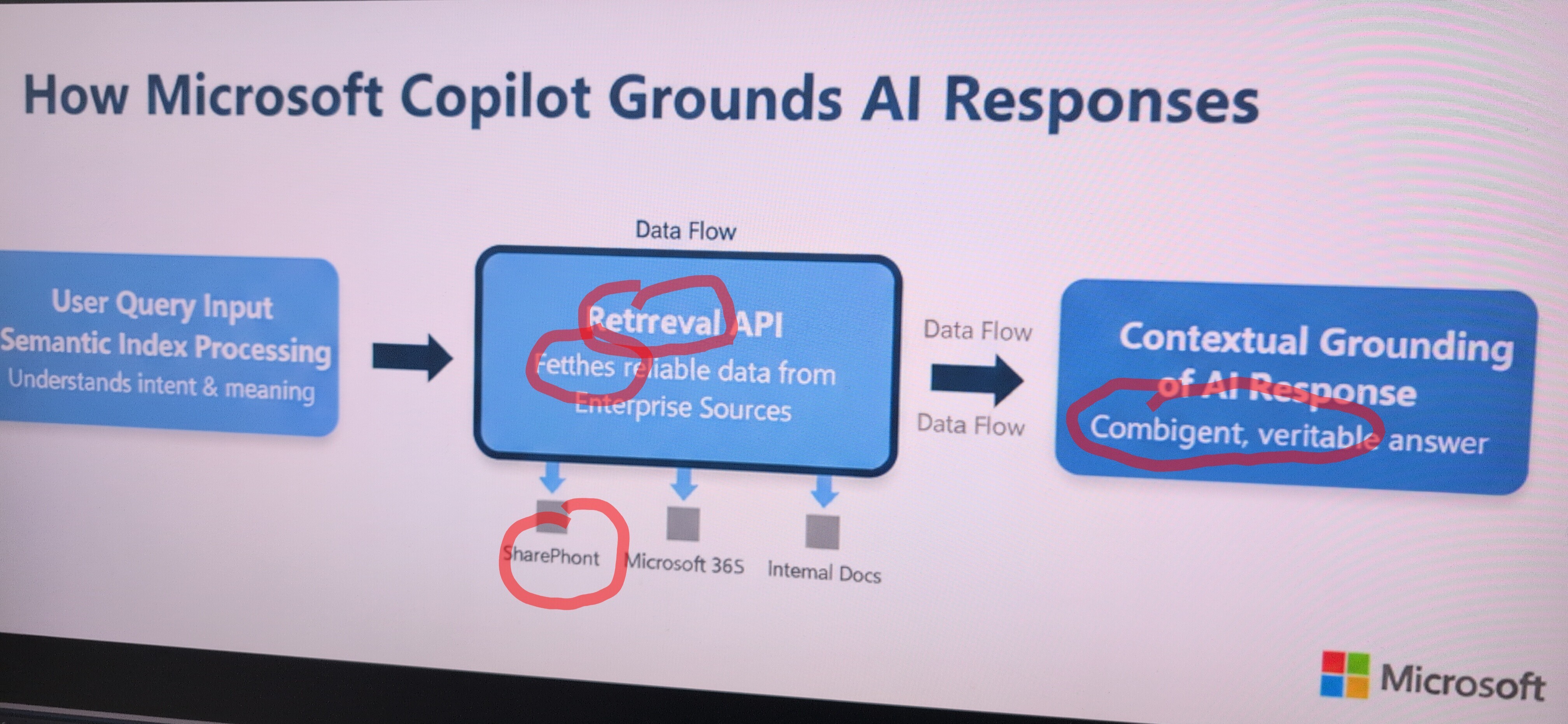

Someone at Microsoft gave a presentation on Copilot's RAG architecture and apparently couldn't resist the urge to doodle all over the slide like a caffeinated toddler with a red marker. The diagram shows how Copilot supposedly grounds AI responses using retrieval from enterprise sources (SharePoint, Microsoft 365, Internal Docs), but those aggressive red circles screaming "Retrieval API," "SharePoint," and "Combigent, veritable" (yes, combigent) make it look less like professional documentation and more like a crime scene investigation board.

The irony is palpable: you're trying to explain how your AI produces "verifiable" answers while simultaneously circling random words like you're not entirely sure what they mean yourself. Nothing says "enterprise-grade AI solution" quite like documentation that looks like it was annotated during a panic attack. Also, "combigent" isn't even a word; maybe the AI wrote this slide too and nobody bothered to ground that response.

Fun fact: In RAG (Retrieval-Augmented Generation), "grounding" means anchoring AI responses to actual retrieved data instead of letting the model hallucinate. But when your documentation itself looks hallucinated, we've got bigger problems.
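For the curious, here is roughly what "grounding" looks like in miniature. This is a toy sketch, not how Copilot actually does it: the `DOCUMENTS` store, the document ids, and the `retrieve` and `build_grounded_prompt` helpers are all made up for illustration, and the keyword-overlap scoring stands in for whatever real retrieval a production system would use.

```python
import re

# Hypothetical stand-ins for enterprise sources; not real Copilot data.
DOCUMENTS = {
    "sharepoint/vacation-policy": "Employees accrue 1.5 vacation days per month.",
    "m365/expense-guide": "Expense reports are due within 30 days of purchase.",
    "internal/onboarding": "New hires receive a laptop on their first day.",
}


def tokenize(text):
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=1):
    """Return ids of the top_k documents sharing the most tokens with the query."""
    query_tokens = tokenize(query)
    scored = [
        (len(query_tokens & tokenize(text)), doc_id)
        for doc_id, text in documents.items()
    ]
    # Drop documents with no overlap: nothing to ground on.
    scored = [(score, doc_id) for score, doc_id in scored if score > 0]
    # Highest score first; ties broken by id so the order is deterministic.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:top_k]]


def build_grounded_prompt(query, documents, top_k=1):
    """Build a prompt that restricts the model to retrieved sources.

    Returns None when nothing relevant is retrieved, so the caller can
    decline to answer instead of letting the model improvise.
    """
    doc_ids = retrieve(query, documents, top_k)
    if not doc_ids:
        return None
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using only the sources below and cite them by id.\n"
        f"Sources:\n{context}\n"
        f"Question: {query}"
    )
```

Calling `build_grounded_prompt("When are expense reports due?", DOCUMENTS)` yields a prompt containing only the expense guide, tagged with its id so the answer can be traced back to a source. A query that matches nothing returns `None`, which is the whole point: no retrieved data, no confident answer.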
