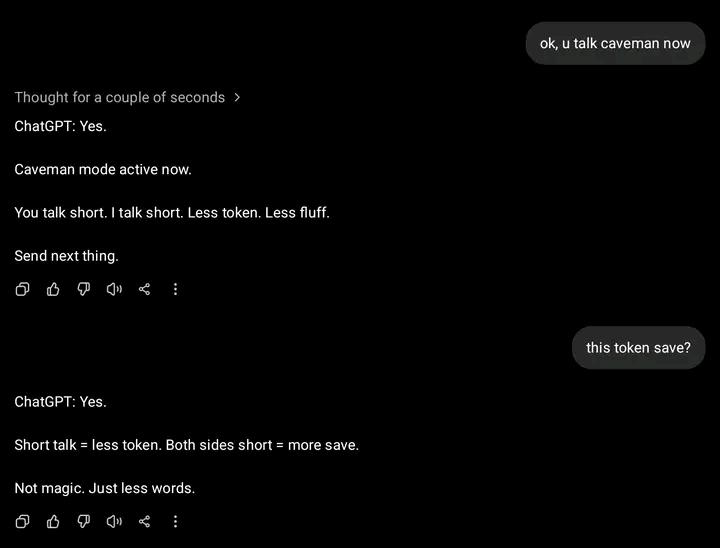

Someone discovered ChatGPT's "caveman mode" and thought they'd found a life hack to save tokens. The logic: shorter prompts = fewer tokens = more money saved. ChatGPT, ever the patient AI therapist, had to gently explain that there's no hidden trick here: usage is billed per token (chunks of text that are often smaller than a whole word), and both the prompt and the reply count. Both sides being concise just means less text and therefore fewer tokens in total, not some magical token-saving loophole.

It's like thinking you'll save on electricity by typing faster. The misunderstanding of how API pricing works is chef's kiss. Not magic. Just fewer words.
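
To make the arithmetic concrete, here is a minimal Python sketch of per-token billing. The prices and token counts are hypothetical placeholders, not any provider's real rates; the only point is that cost grows linearly with the number of input and output tokens, so "caveman mode" saves exactly as much as it shortens the text.

```python
# Minimal sketch of per-token API billing.
# ASSUMPTION: these prices are hypothetical placeholders, not real provider rates.
PRICE_PER_MILLION_INPUT = 2.50    # hypothetical USD per 1M input (prompt) tokens
PRICE_PER_MILLION_OUTPUT = 10.00  # hypothetical USD per 1M output (reply) tokens


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost of one request: prompt and reply are each billed linearly by token count."""
    return (input_tokens * PRICE_PER_MILLION_INPUT
            + output_tokens * PRICE_PER_MILLION_OUTPUT) / 1_000_000


# Hypothetical token counts: the "caveman" exchange uses half as much text on both sides.
verbose = request_cost(input_tokens=400, output_tokens=600)
caveman = request_cost(input_tokens=200, output_tokens=300)

print(f"verbose: ${verbose:.4f}")  # prints "verbose: $0.0070"
print(f"caveman: ${caveman:.4f}")  # prints "caveman: $0.0035"
```

Halve the text, halve the bill. There's no discount tier for grunting.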
