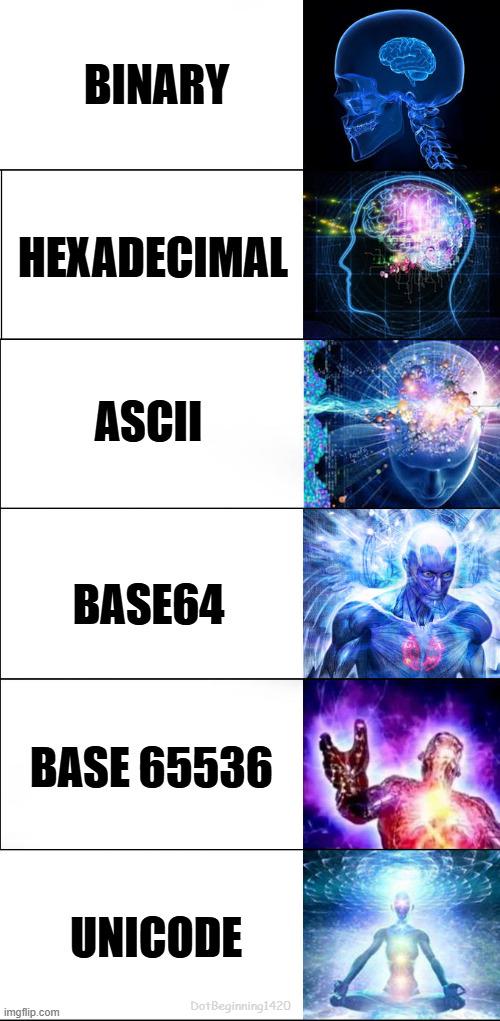

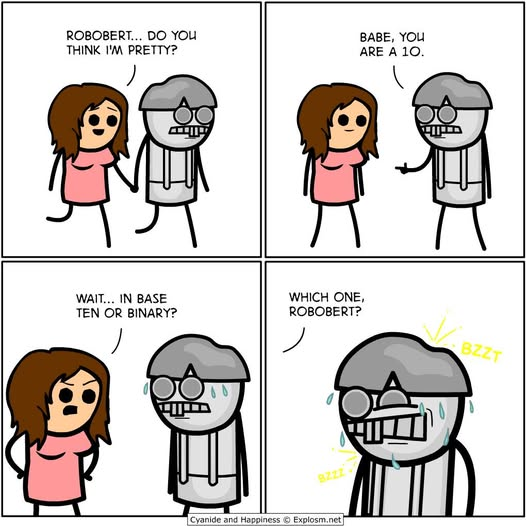

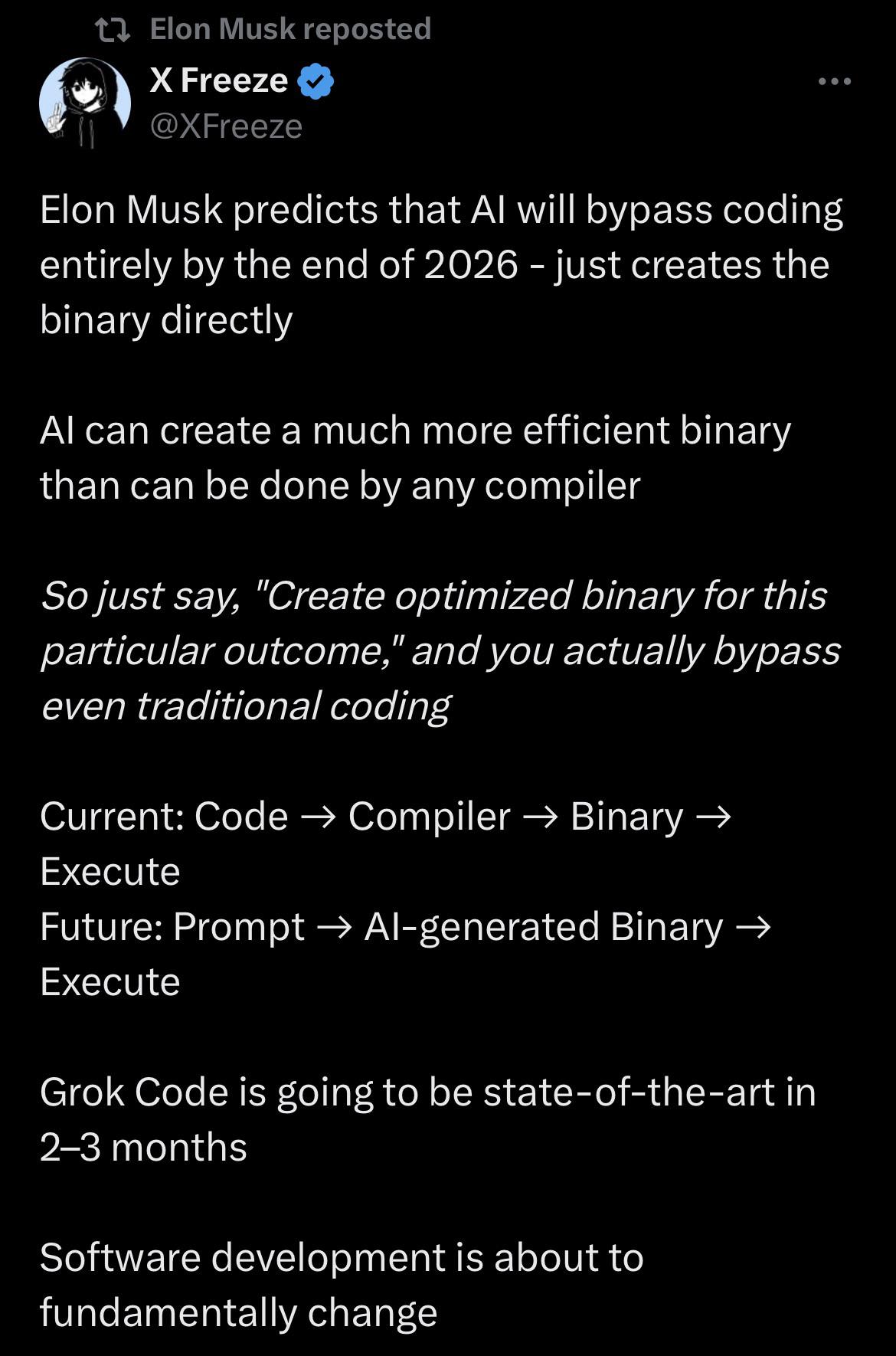

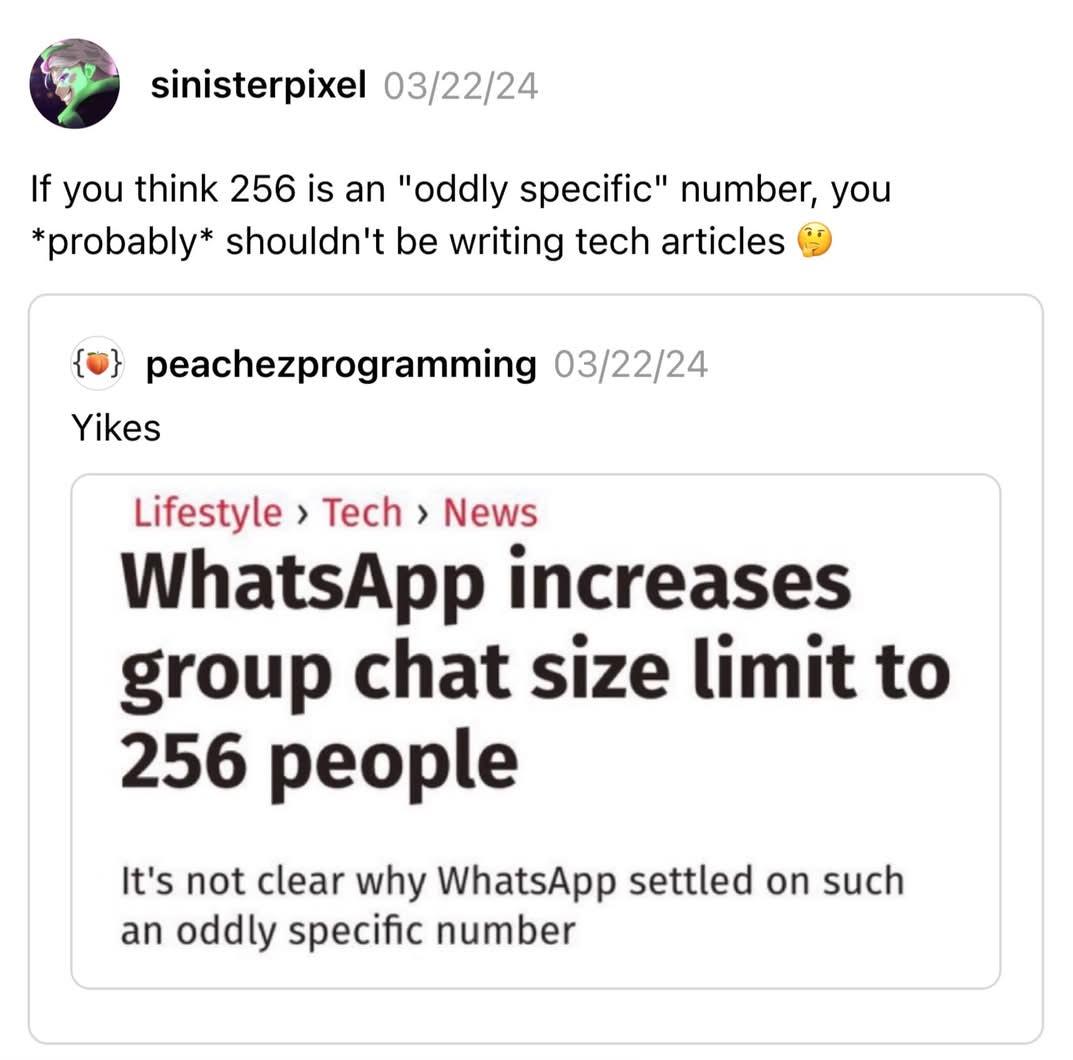

Elon just got too inspired by a meme like this one when he requested to switch off microservices

Programming

83.9K views

1 year ago

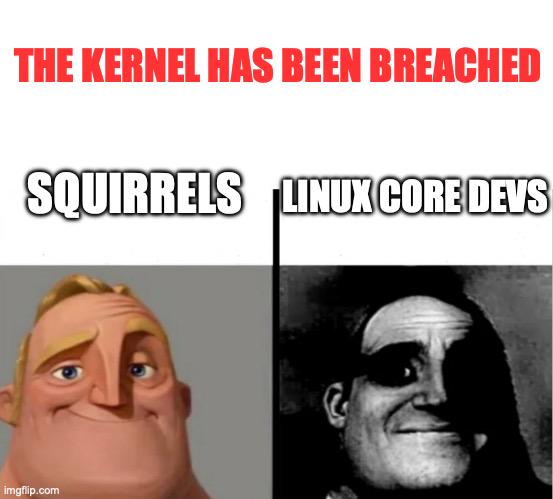

The Kernel Has Been Breached

Linux

353.7K views

1 year ago

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++

Csharp