Today's picks

Dell 27 Plus 4K Monitor - S2725QS - 27-inch 4K (3840 x 2160) 120Hz 16:9 Display, IPS Panel, AMD FreeSync Premium, sRGB 99%, Integrated Speakers, 1500:1 Contrast Ratio, Comfortview Plus - Ash White

Affiliate

$279.00

GearScouts.com

Sponsored

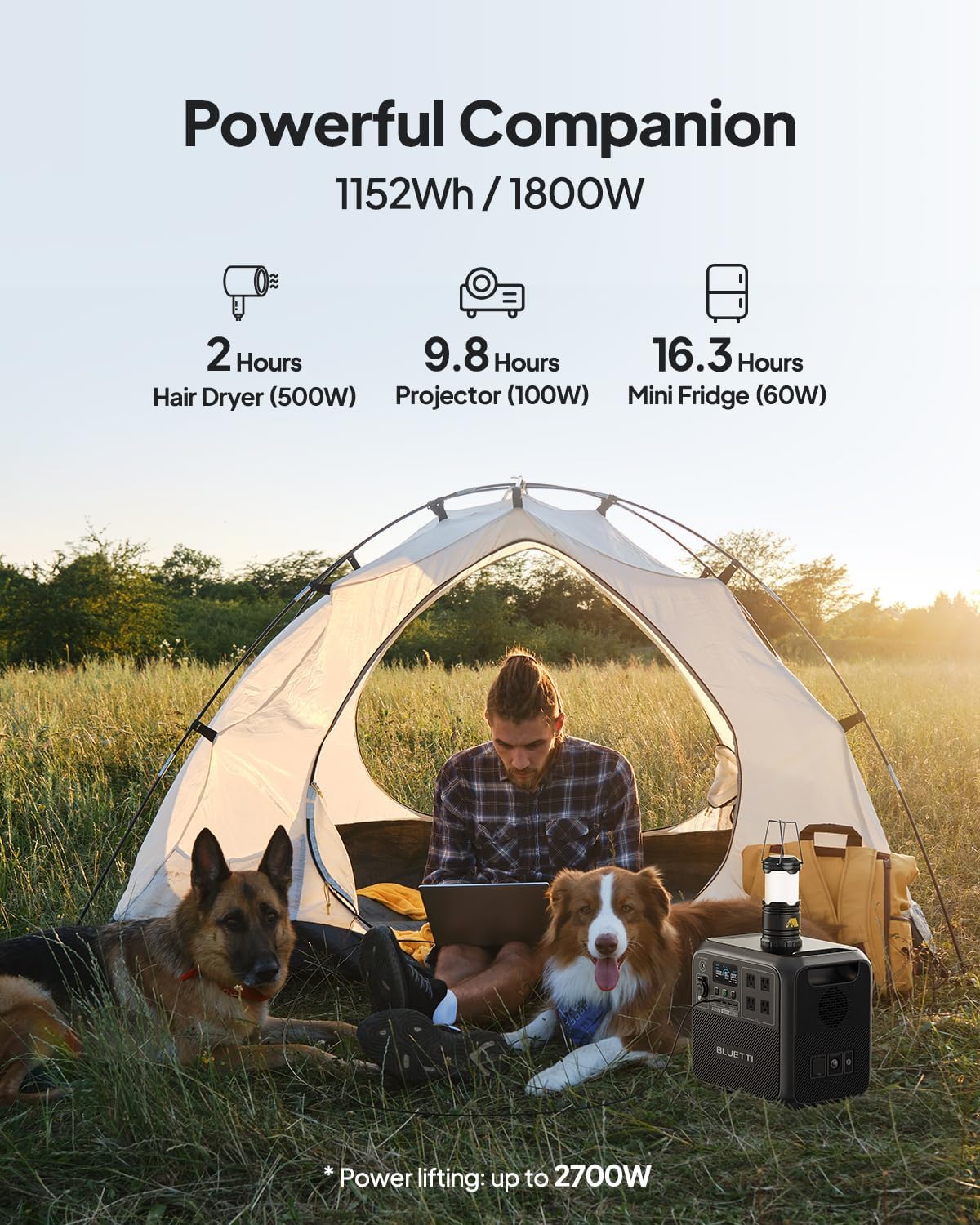


Programming

68.7K views

4 years ago

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++