Today's picks

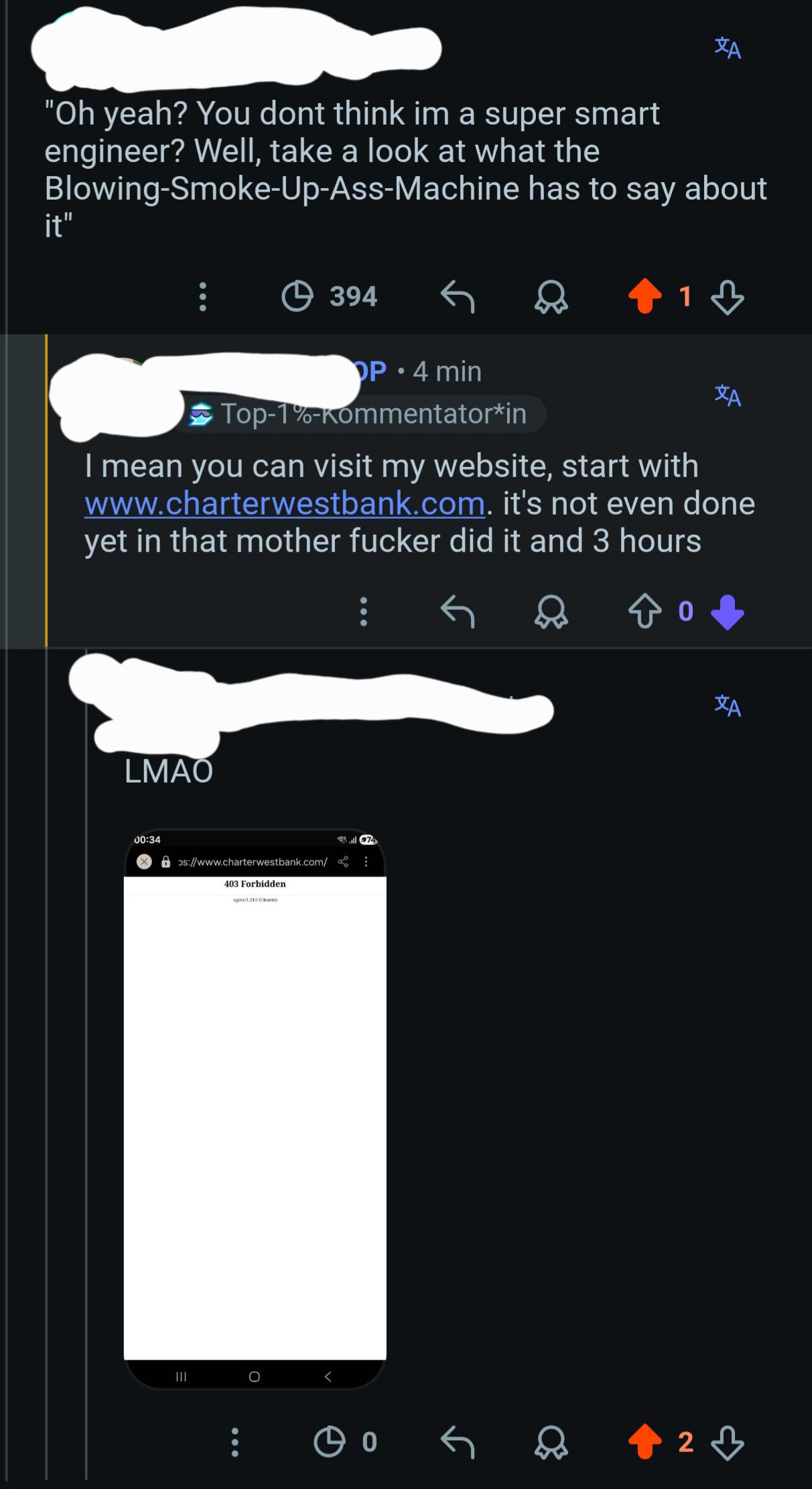

Junior Shocked Senior Rocked At Every Intense Situation

Debugging

250.6K views

1 year ago

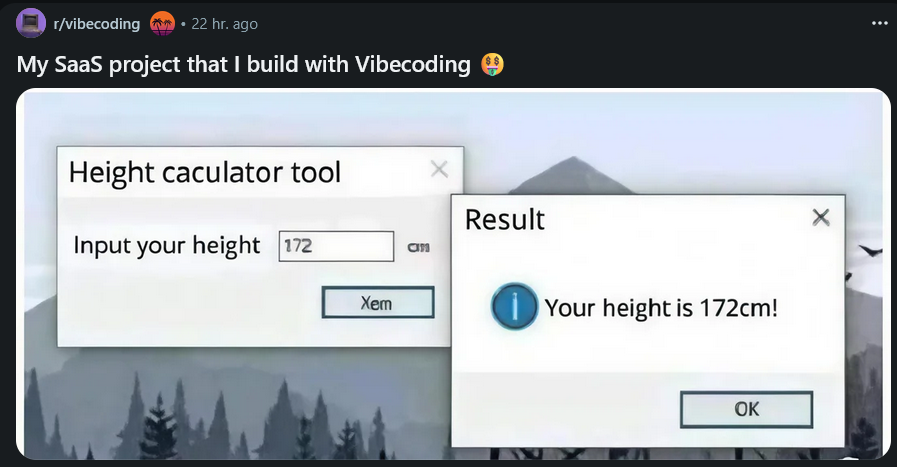

Dark mode for life.

Programming

74.5K views

2 years ago

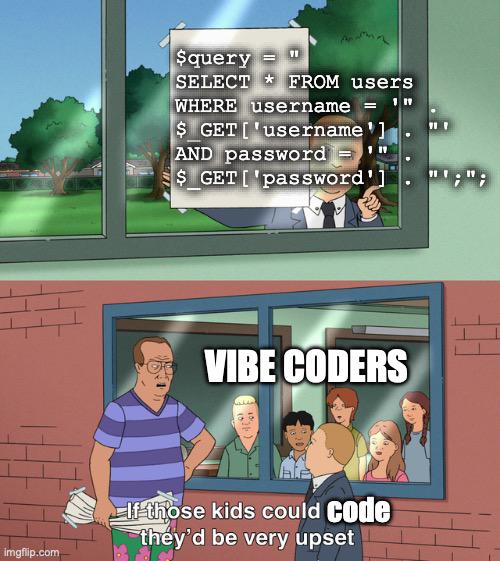

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++
