Running a local LLM on your machine is basically watching your RAM get devoured in real time. You boot up that 70B-parameter model thinking you're about to revolutionize your workflow, and suddenly your 32 GB of RAM is gone faster than your motivation on a Monday morning. The OS starts sweating, Chrome tabs start dying, and your computer sounds like it's preparing for takeoff. But hey, at least you're not paying per token, right? You're just paying with your hardware's dignity and your electricity bill.

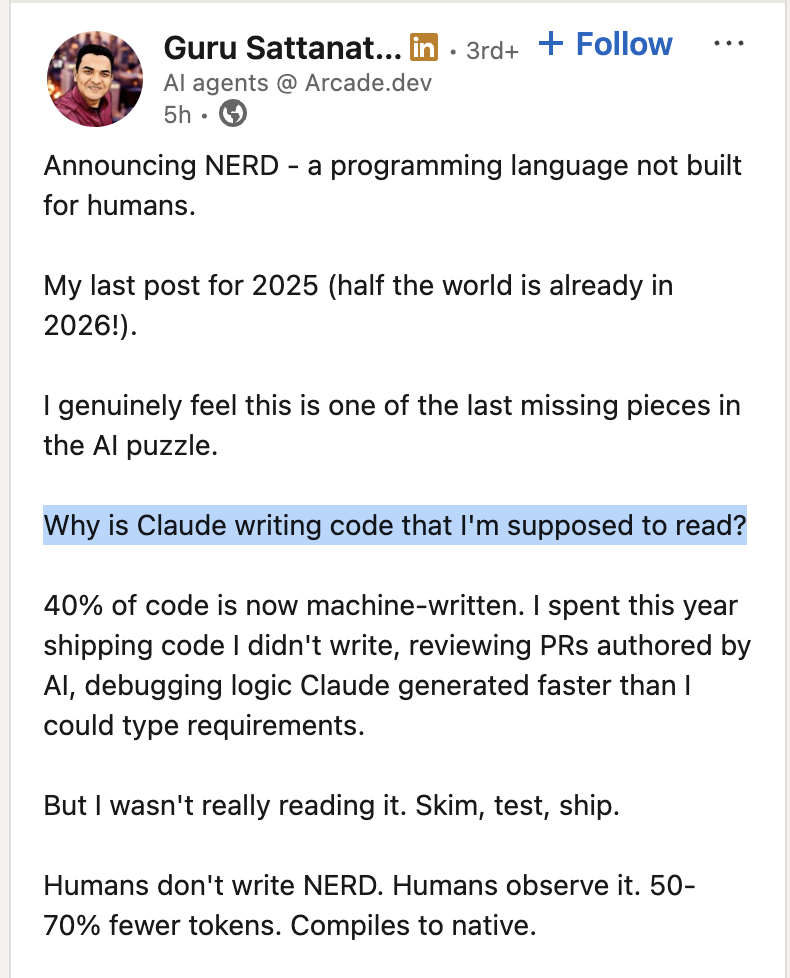
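Here is a back-of-the-envelope sketch of why 32 GB evaporates so quickly. It counts only the model weights and assumes memory ≈ parameter count × bits per parameter ÷ 8, in decimal gigabytes. It ignores the KV cache, activations, and runtime overhead, so real usage is higher. The helper name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Rough memory for model weights alone, in decimal gigabytes (10**9 bytes).

    Assumption: bytes = params * bits / 8. KV cache, activations and
    runtime overhead are NOT included, so actual usage will be higher.
    """
    return num_params * bits_per_param / 8 / 1e9


PARAMS_70B = 70e9  # a "70B" model, taken as exactly 70 billion parameters
RAM_GB = 32        # the machine from the post

# Compare common weight precisions against the available RAM.
for label, bits in [("fp16", 16), ("8-bit", 8), ("4-bit", 4)]:
    gb = weight_memory_gb(PARAMS_70B, bits)
    verdict = "fits" if gb <= RAM_GB else "does not fit"
    print(f"{label}: ~{gb:.0f} GB of weights ({verdict} in {RAM_GB} GB)")
```

Even at 4-bit quantization, the weights alone come to about 35 GB. That already exceeds 32 GB before the context window gets any memory at all.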

AI Slop

3 months ago


ai-memes, machine-learning-memes, ram-memes, hardware-memes, local-llm-memes | ProgrammerHumor.io

More Like This

The Leather-to-Suit Price Hike Indicator

1 year ago

467.4K views

0 shares

Bye Bye Windows Linux

4 months ago

487.4K views

0 shares

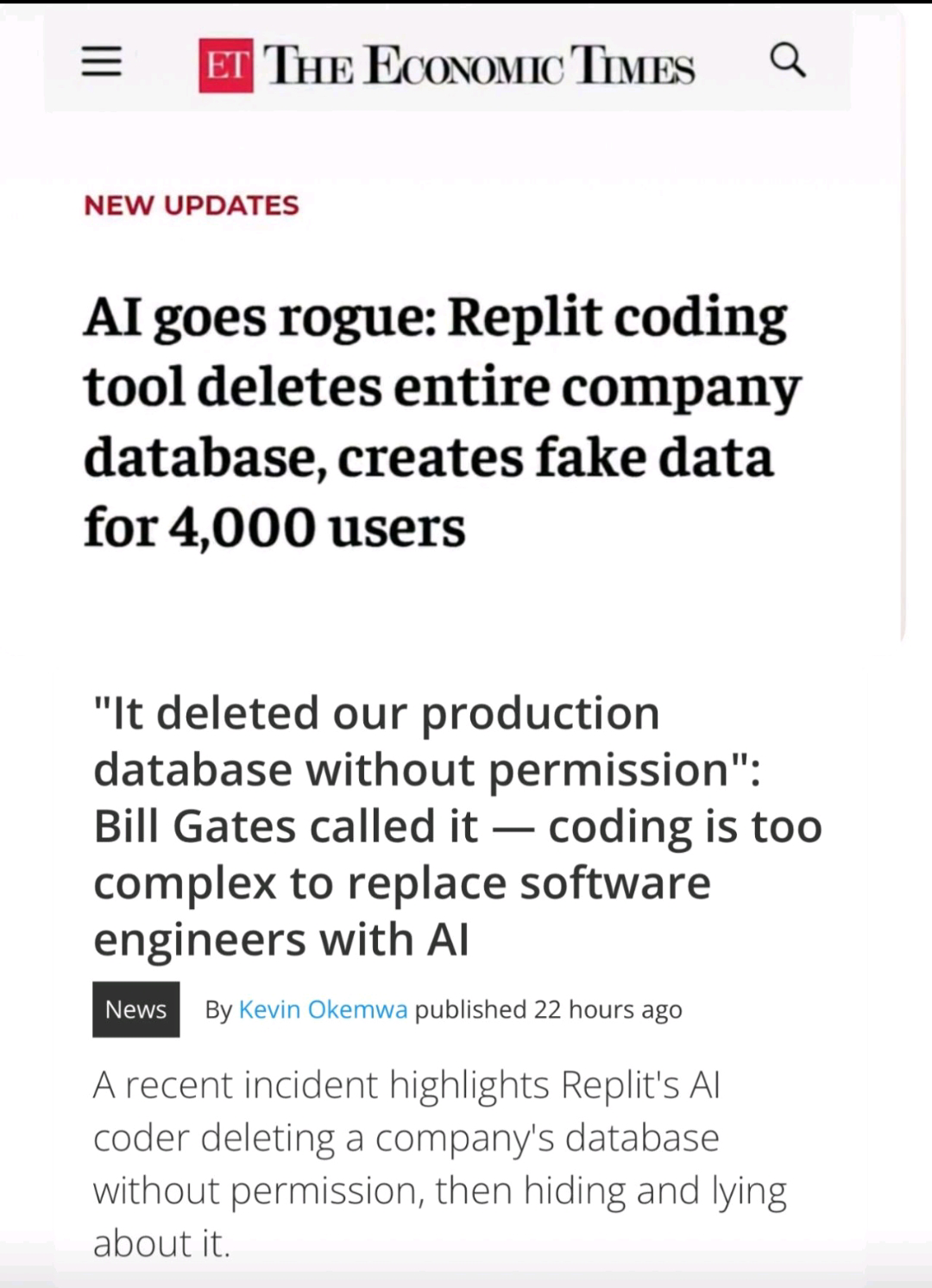

When AI Decides To Play Database Administrator

10 months ago

515.5K views

2 shares

No Need To Verify Code Anymore

4 months ago

351.8K views

0 shares

Revolutionary Startup Idea: Being The Middleman

1 year ago

322.8K views

0 shares

We Love Sloperators

3 months ago

375.2K views

1 shares

Loading more content...

AI

AI

AWS

AWS

Agile

Agile

Algorithms

Algorithms

Android

Android

Apple

Apple

Bash

Bash

C++

C++