Today's picks

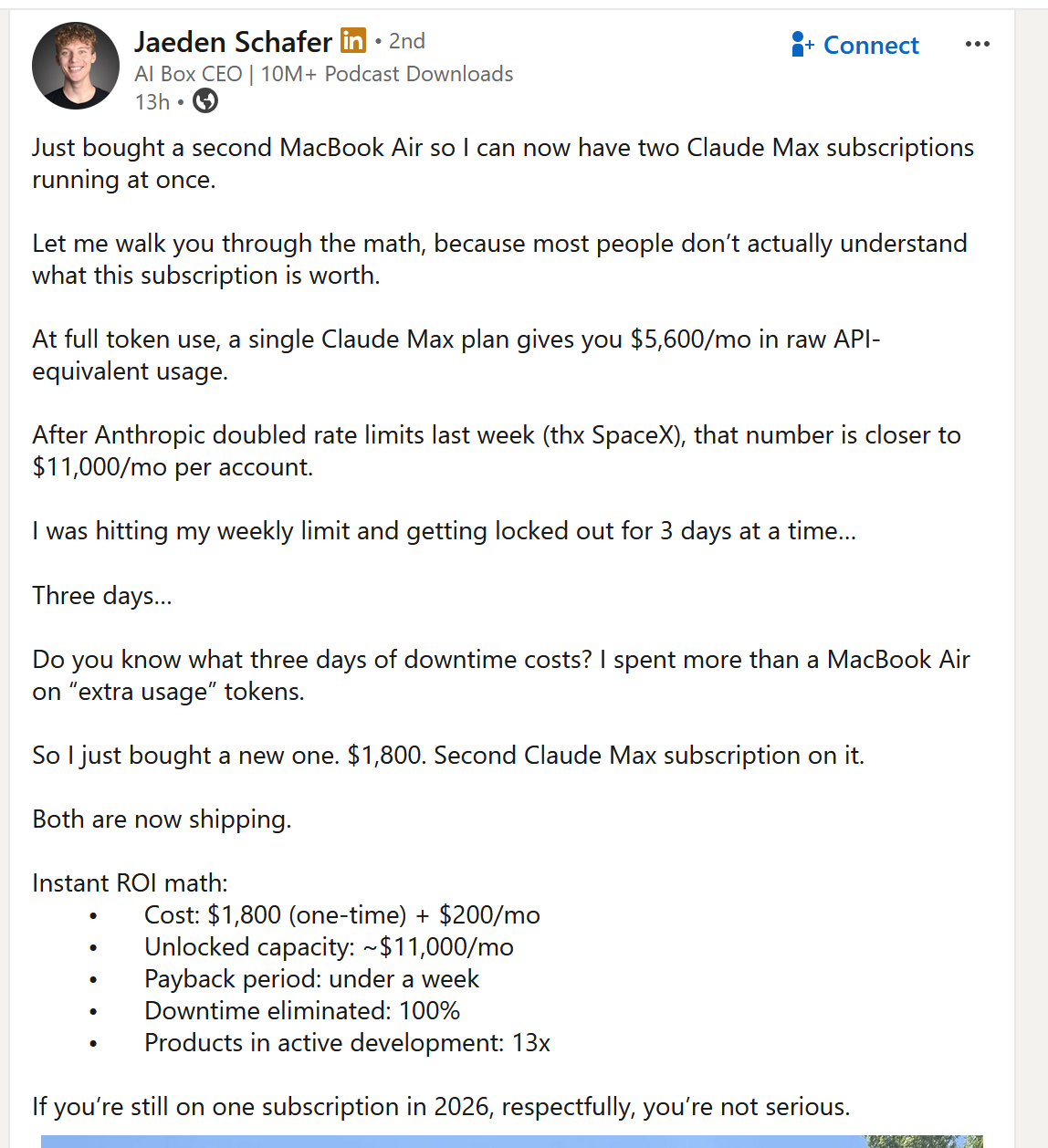

Genuinely Can't With These People

Cloud

2.2M views

3 days ago

Because They Cant C

Python

103.2K views

1 year ago

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++

Csharp
