Today's picks

Average Production Cycle

Testing

77.6K views

1 year ago

jQuery strikes again

JavaScript

163.4K views

4 years ago

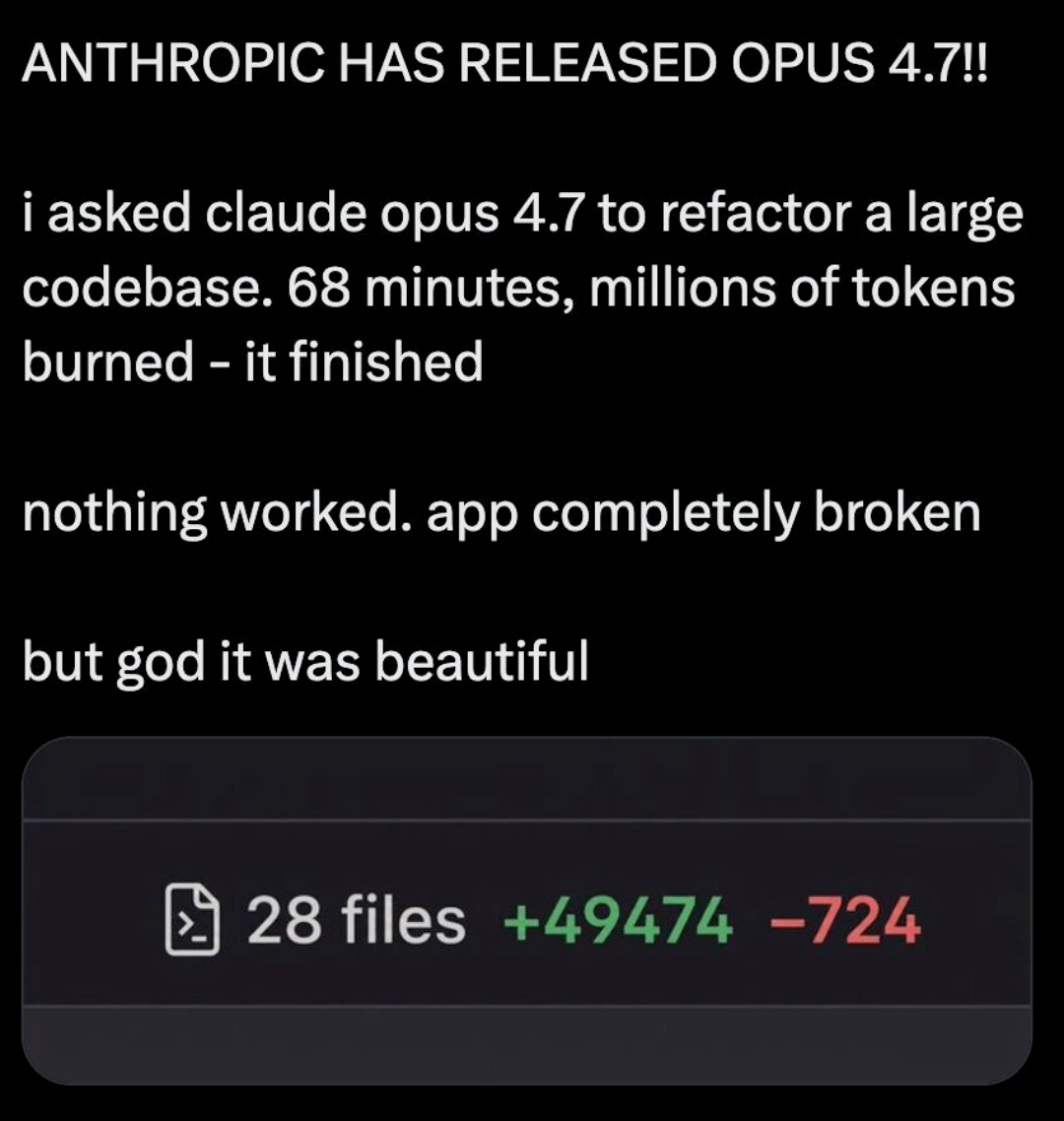

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++

Csharp