Today's picks

Only Option Remaining

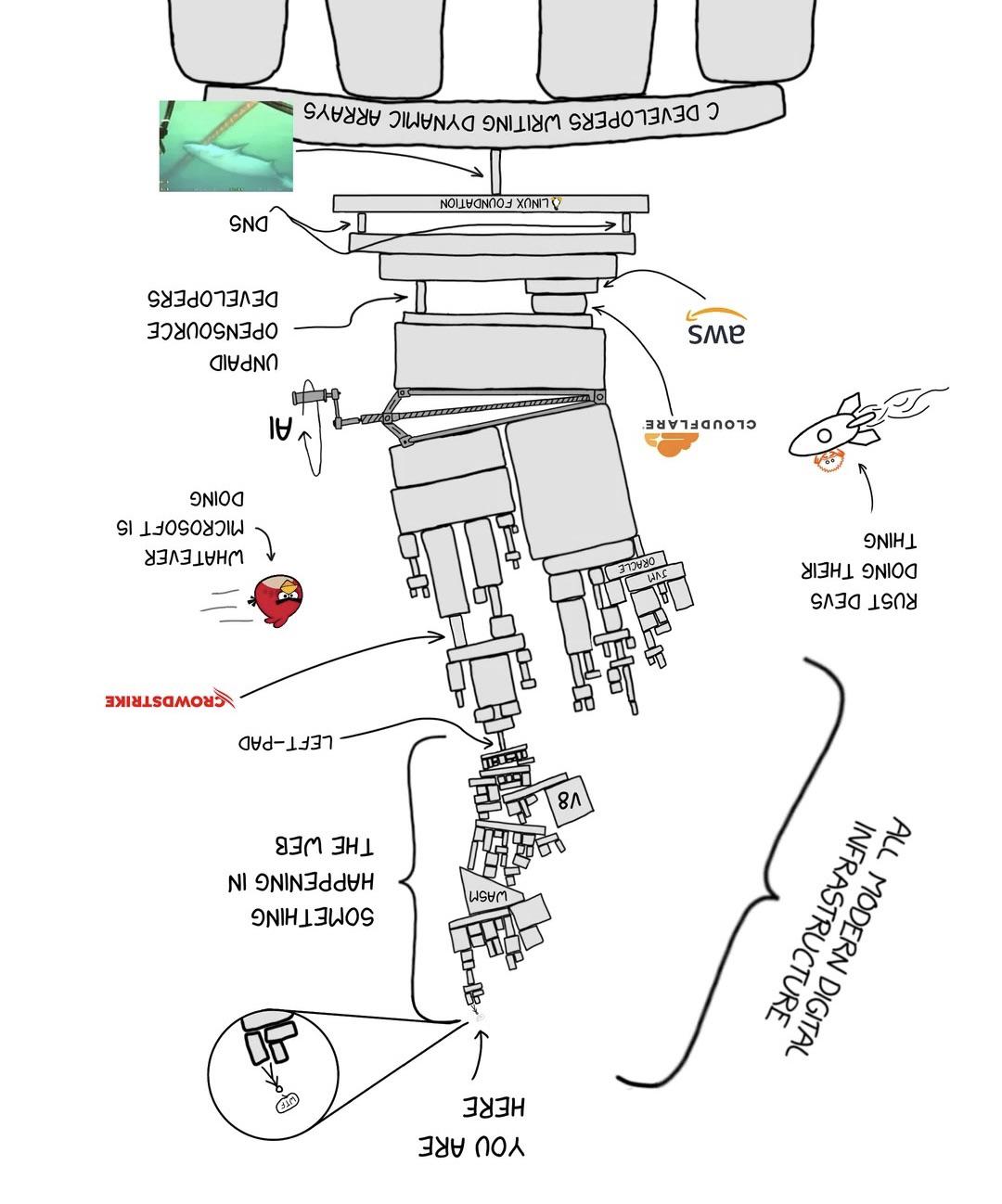

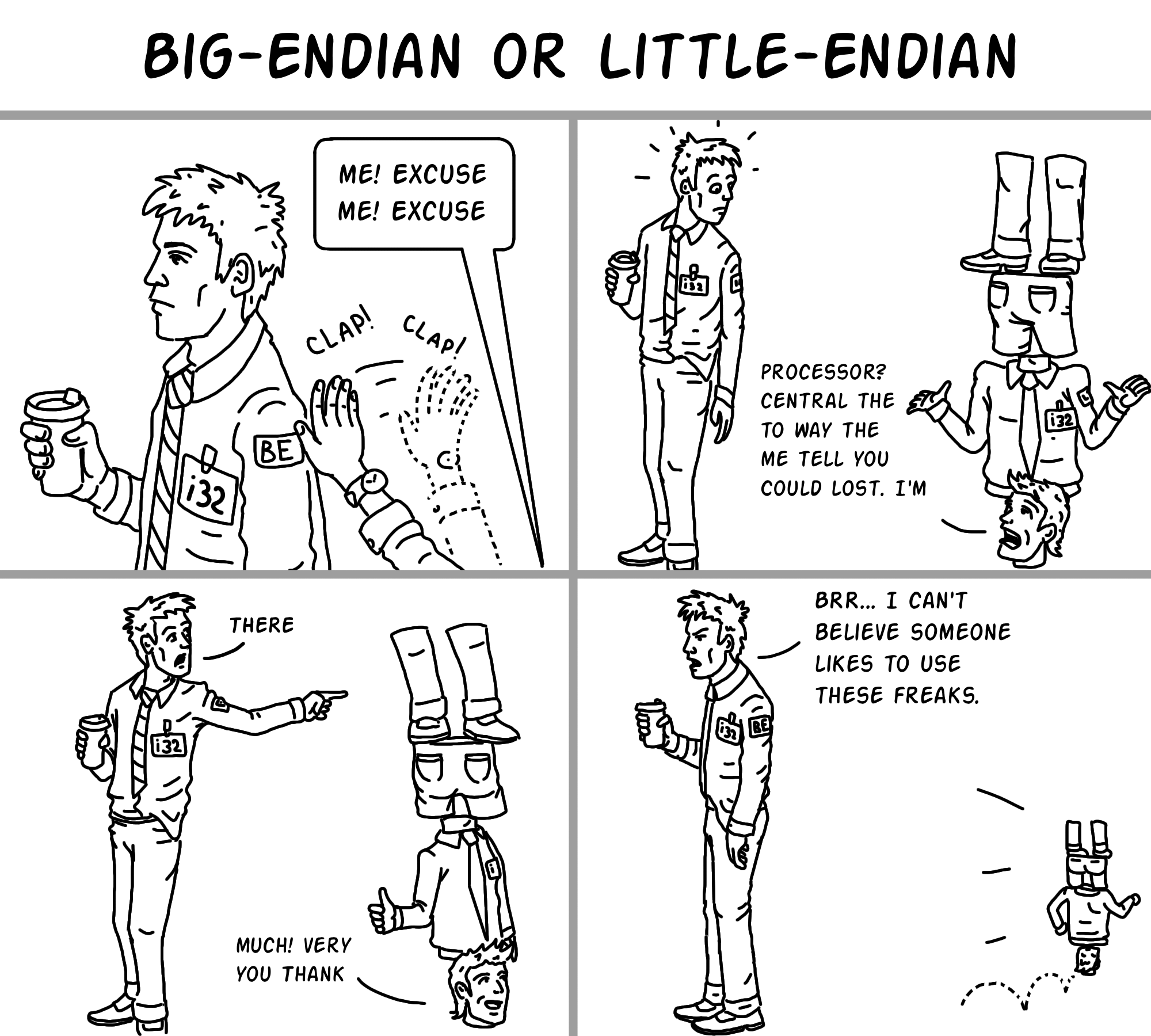

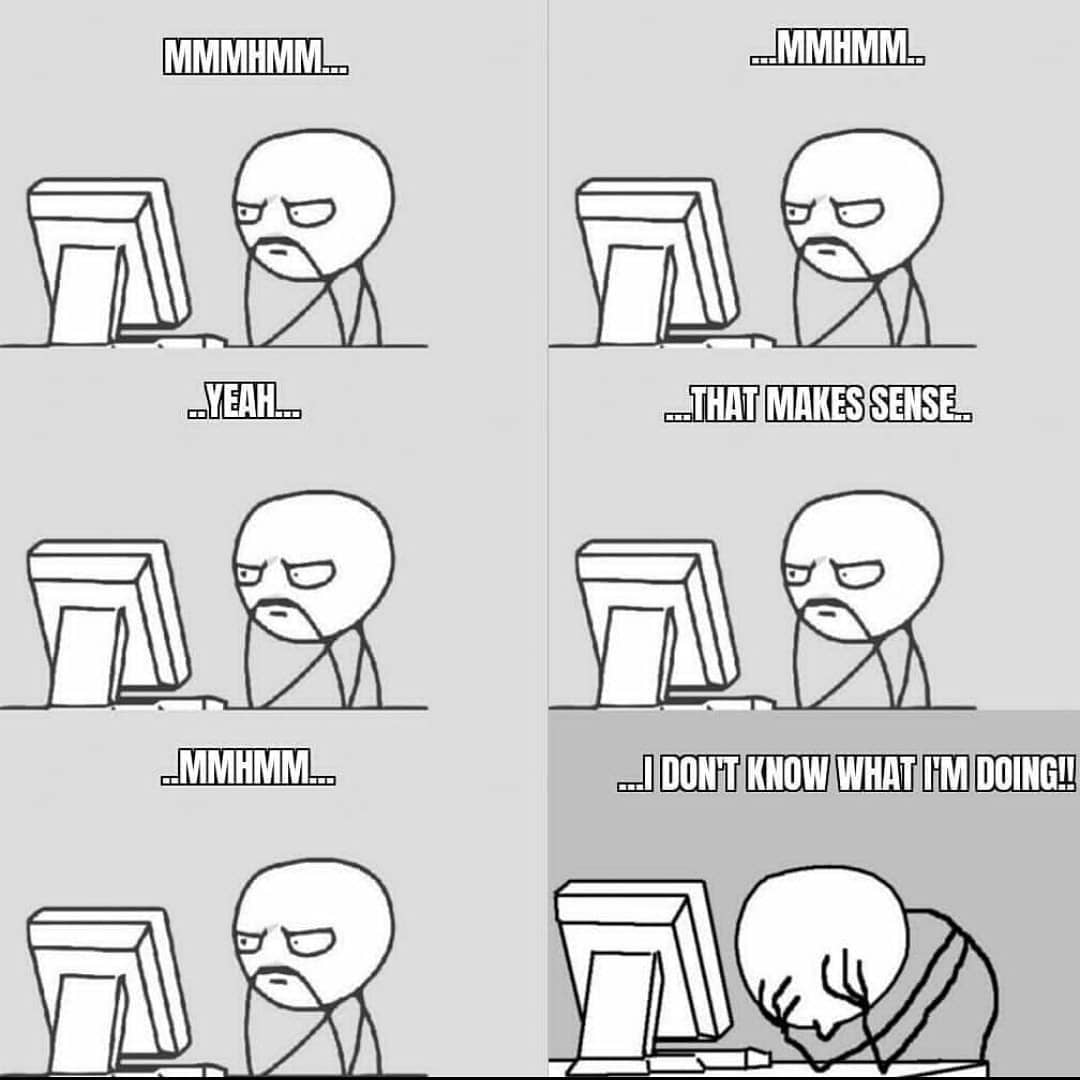

Programming

19.3M views

3 days ago

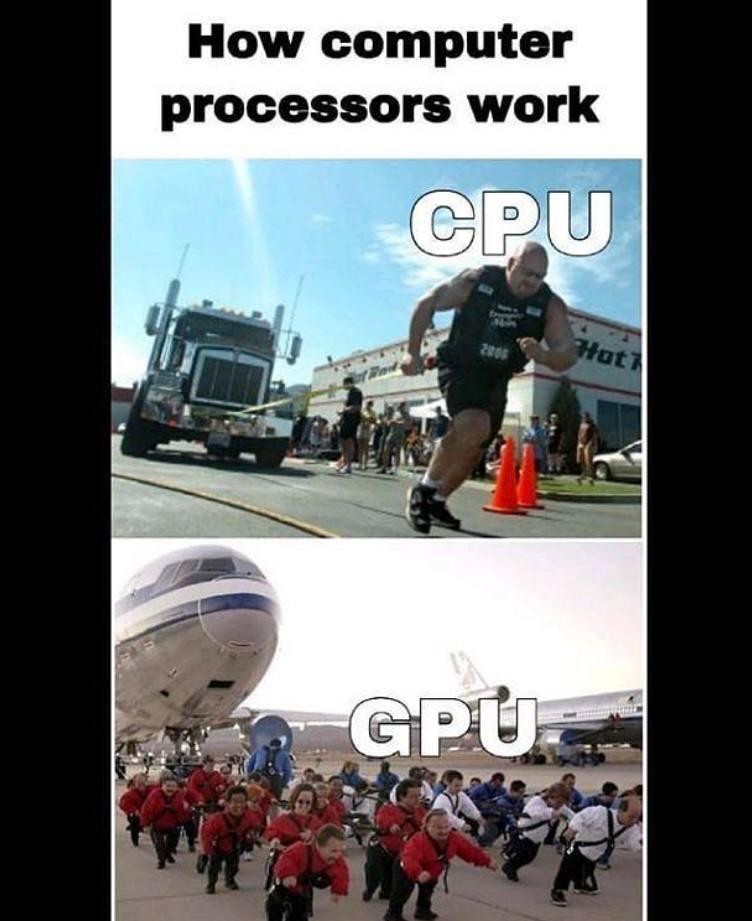

The anger moment on every Monday

Programming

79.3K views

4 years ago

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++
