Today's picks

MINIX K1 USB C KVM Switch 1 Monitors 2 Computers, 4K@120Hz HDR, 100W PD 3.0, Dual USB-C Input KVM Switches, Share Keyboard & Mouse, Aluminum Design, Compatible with Windows, Mac, Linux, Android

Affiliate

$41.90

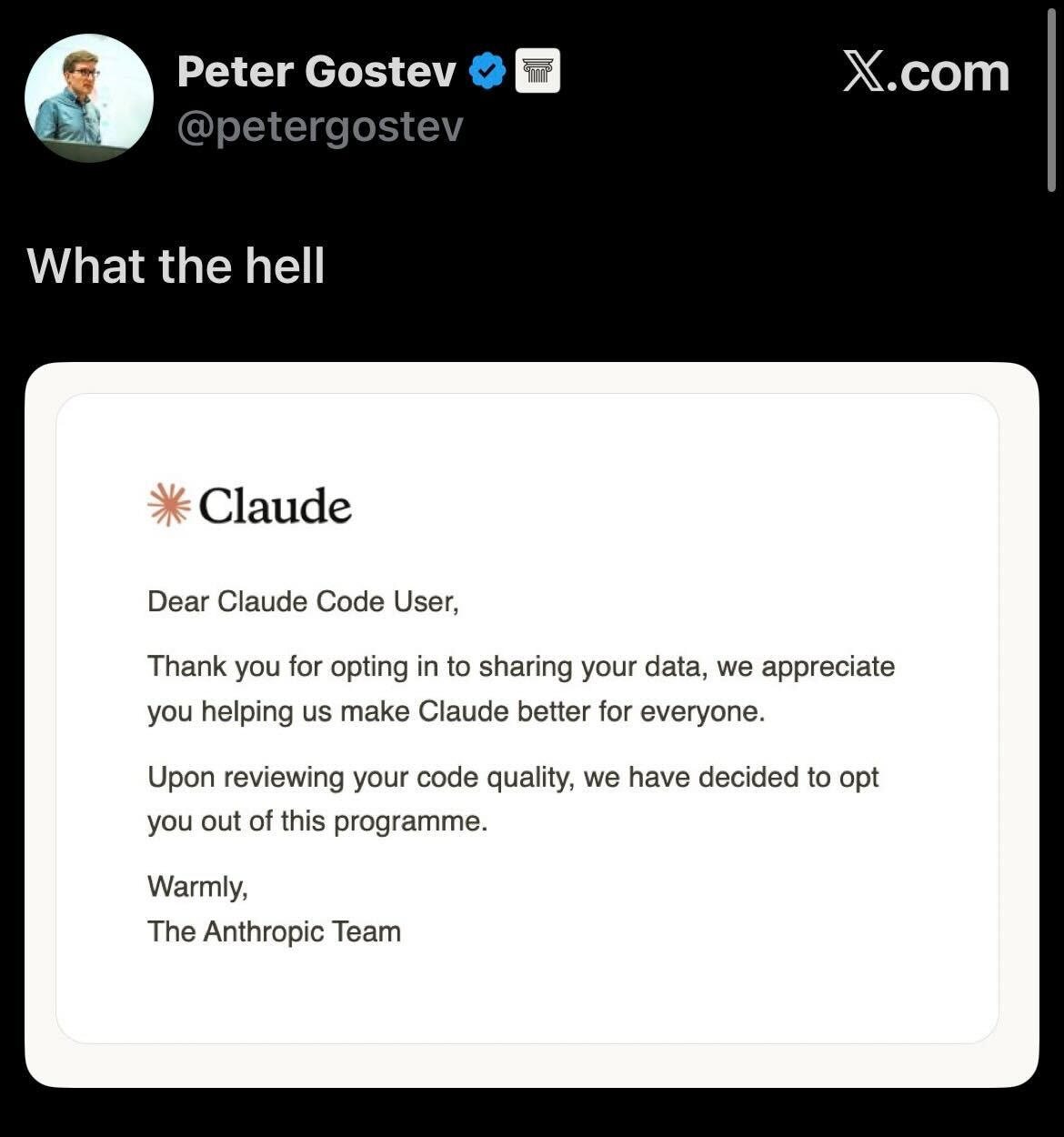

AI

AWS

Agile

Algorithms

Android

Apple

Bash

C++

Csharp
